Contrarian take on agent complexity: building smaller, tighter AI agents beats feature-bloated ones in production

The Discipline Nobody Talks About When Building AI Agents

There is a specific kind of technical debt that only shows up in agent systems, and it doesn’t announce itself. It accumulates quietly, one reasonable decision at a time, until the day you’re staring at a system diagram that looks like a subway map and realizing you cannot explain what happens when tool call number four fails during a memory write.

I’ve been there. More than once.

The hardest part of building AI agents is not the model. It’s not the tooling, the prompting, or even the evals. It’s knowing when to stop.

How Complexity Sneaks In

It always starts the same way. You have a clear problem. You build a tight agent that solves it. The demo goes well. Someone asks, “Can it also handle X?” and you say yes, because X seems small. Then Y. Then a memory layer because stateless felt limiting. Then a retry loop because production is messy. Then a fallback model. Then a classifier to decide which fallback to trigger.

Six weeks later, nobody on the team fully understands the system. Including you.

In traditional software, we called this integration complexity. In agent systems, it compounds faster, because each new capability doesn’t just add to the system linearly. It can interact with every other capability in ways you didn’t model, so the number of possible interactions grows much faster than the number of features. The state space doesn’t grow; it explodes. Quietly, between sprints.
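To put rough numbers on that, here is a minimal sketch under a deliberately simple model that I am assuming for illustration: every capability can be on or off, and any two capabilities can interact. The function name and the model are hypothetical, not a standard metric.

```python
from math import comb


def interaction_counts(n_capabilities: int) -> dict:
    """Count interactions under a toy model (an assumption, not a measurement):
    each capability is either on or off, and any pair may interact."""
    return {
        "capabilities": n_capabilities,
        # Unordered pairs of capabilities that could affect each other.
        "pairwise_interactions": comb(n_capabilities, 2),
        # Distinct on/off configurations the system can be in.
        "on_off_configurations": 2 ** n_capabilities,
    }


for n in (1, 3, 6):
    print(interaction_counts(n))
```

Under this model, going from three capabilities to six doubles the feature count but takes pairwise interactions from 3 to 15 and on/off configurations from 8 to 64.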

The Question That Cuts Through It

The discipline I’ve actually found useful is brutal and simple. Before adding anything to an agent, ask what breaks if you remove it instead.

Not “what could go wrong if we add this.” That question is too abstract. You’ll always find reasons to add things. The removal question forces specificity. If you can’t name exactly what breaks when you strip a capability out, you probably don’t need it yet.
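One way to make the removal question concrete is an ablation check: run the same eval cases with each capability switched off and list what stops passing. The sketch below assumes a hypothetical agent that takes a prompt plus a set of enabled capability names. `removal_report`, `toy_agent`, and the capability names are all illustrative, not part of any real framework.

```python
from typing import Callable, Dict, FrozenSet, List, Tuple

# Hypothetical shapes: an agent is a function of (prompt, enabled capabilities),
# and an eval case is a prompt plus a pass/fail check on the output.
Agent = Callable[[str, FrozenSet[str]], str]
EvalCase = Tuple[str, Callable[[str], bool]]


def passing_cases(agent: Agent, enabled: FrozenSet[str],
                  cases: Dict[str, EvalCase]) -> set:
    """Names of the eval cases that pass with the given capabilities enabled."""
    return {name for name, (prompt, check) in cases.items()
            if check(agent(prompt, enabled))}


def removal_report(agent: Agent, capabilities: List[str],
                   cases: Dict[str, EvalCase]) -> Dict[str, List[str]]:
    """For each capability, list the cases that pass with everything enabled
    but fail once that single capability is removed."""
    full = frozenset(capabilities)
    baseline = passing_cases(agent, full, cases)
    return {cap: sorted(baseline - passing_cases(agent, full - {cap}, cases))
            for cap in capabilities}


def toy_agent(prompt: str, enabled: FrozenSet[str]) -> str:
    """Stand-in agent: only the 'search' capability changes its behavior."""
    if prompt.startswith("lookup:") and "search" in enabled:
        return "found " + prompt[len("lookup:"):].strip()
    return "echo " + prompt


CASES: Dict[str, EvalCase] = {
    "lookup_works": ("lookup: order 42", lambda out: out.startswith("found")),
    "echo_works": ("hello", lambda out: out == "echo hello"),
}

report = removal_report(toy_agent, ["search", "memory"], CASES)
print(report)  # {'search': ['lookup_works'], 'memory': []}
```

An empty list, as with `memory` here, means no eval case can name what breaks without that capability. Either the capability is not needed yet, or the evals do not cover it, and both are worth knowing before it ships.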

This runs against how most engineering teams are wired. We’re trained to think in features, in additions. Subtraction feels like regression. But in my experience with production agent systems, a lean agent you fully understand is more reliable than a more capable one you can’t reason about.

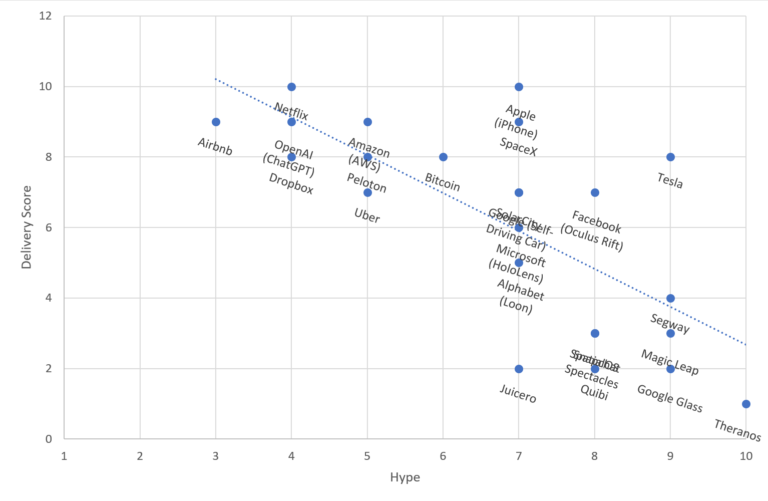

What the Certification Surge Is Actually Telling Us

Anthropic recently launched the Claude Certified Architect exam, and the enterprise adoption numbers around Claude are worth paying attention to. Accenture is training 30,000 people on Claude. Cognizant has rolled Claude out to 350,000 employees. Deloitte has opened access to 470,000 people. These are not small bets.

The exam itself is reportedly tough: 60 questions in two hours, proctored by webcam, with no breaks. Participants say the agentic architecture and multi-agent orchestration sections are where people struggle most. That makes sense. Those are exactly the areas where complexity bites hardest in production.

What I find interesting is that the exam focuses on architecting systems that work in the real world, not on prompting or chat. The industry is signaling that production agent architecture is a distinct skill. I’d argue the most important part of that skill is restraint.

Why Smaller Wins in Production

A bloated agent is not just harder to debug. It’s harder to monitor, harder to test, and harder to hand off. When something goes wrong at 2am, you want a system a tired engineer can read end to end in ten minutes. You want a system where the failure mode is obvious, not emergent.

The agents I’ve seen perform best in production share a common trait. They do one thing, they do it well, and their failure behavior is predictable. You can compose narrow agents into larger workflows. You cannot easily decompose a monolithic agent that has grown organically over two months.
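Composition with predictable failure behavior can be sketched in a few lines. This is an illustration under my own assumptions, not a reference design: each "agent" is a plain function from text to text, and the step names, `StepResult`, and `run_pipeline` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# A narrow agent is modeled as a named text-to-text function (an assumption).
Step = Tuple[str, Callable[[str], str]]


@dataclass
class StepResult:
    ok: bool
    value: str
    failed_step: Optional[str] = None
    error: Optional[str] = None


def run_pipeline(steps: List[Step], text: str) -> StepResult:
    """Run narrow steps in order and stop at the first failure, recording
    which step failed so the failure mode is named rather than emergent."""
    for name, step in steps:
        try:
            text = step(text)
        except Exception as exc:
            return StepResult(ok=False, value=text, failed_step=name, error=str(exc))
    return StepResult(ok=True, value=text)


def normalize(text: str) -> str:
    return text.strip().lower()


def classify(text: str) -> str:
    if not text:
        raise ValueError("empty input")
    return "billing" if "invoice" in text else "general"


def route(label: str) -> str:
    return f"queue:{label}"


STEPS: List[Step] = [("normalize", normalize), ("classify", classify), ("route", route)]

print(run_pipeline(STEPS, "  Invoice overdue "))
print(run_pipeline(STEPS, "   "))
```

The first call succeeds with `queue:billing`. The second stops at `classify` and reports `empty input`, which is the kind of answer a tired engineer can act on without tracing the whole system.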

Karpathy has been saying for a while that AI agents are becoming the foundation of how software gets built going forward. He’s right. But foundations need to be load-bearing, not decorative. A foundation built from feature sprawl will crack under real-world load.

Where This Goes

The teams that will build durable agent systems are not the ones chasing capability counts. They’re the ones who treat complexity as a cost to be justified, not a feature to be celebrated. Every memory layer, every fallback, every classifier in your agent pipeline is technical debt until proven otherwise.

The field is maturing fast. The certification push, the enterprise adoption numbers, the growing body of production postmortems, all of it points toward an industry that is starting to learn what production actually demands. Smaller, tighter, and fully understood beats impressive and opaque. That’s not a philosophy. It’s what ships.
