Karpathy’s multi-agent research org experiment: parallelism works, scientific judgment doesn’t yet

I’ve been waiting for someone credible to actually run this experiment instead of just theorizing about it. Andrej Karpathy did. And the results are more interesting than either the optimists or the skeptics predicted.

The Setup

Eight agents. Four Claude, four Codex. Each agent gets its own GPU and runs independent experiments on the same neural net problem: trying to delete the logit softcap in nanochat without regression. Karpathy tried different organizational structures, including solo researchers working independently and a chief scientist delegating to junior researchers. Each research program lives on its own git branch. Agents fork into feature branches, git worktrees keep things isolated, and the whole thing runs in tmux window grids so you can watch every agent work in real time and take over if needed.
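For readers who want a concrete picture of that layout, here is a minimal sketch that only builds the git and tmux commands for it, without running anything. The branch names, worktree paths, session name, and the placeholder launch command are all my assumptions, not details from Karpathy’s setup, and it uses one tmux window per agent rather than a pane grid to keep it short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    name: str    # hypothetical label, e.g. "claude-0"
    gpu: int     # GPU index this agent is pinned to
    branch: str  # hypothetical feature branch for this agent


def make_agents(n_claude=4, n_codex=4):
    """Four Claude plus four Codex agents, one GPU each."""
    names = [f"claude-{i}" for i in range(n_claude)]
    names += [f"codex-{i}" for i in range(n_codex)]
    return [Agent(name=n, gpu=i, branch=f"agents/{n}") for i, n in enumerate(names)]


def setup_commands(agents, base_branch="research-program",
                   session="research-org", launch_cmd="echo agent-placeholder"):
    """Return argv lists; pass each to subprocess.run to actually execute them.

    base_branch, session, and launch_cmd are illustrative assumptions.
    """
    cmds = [["tmux", "new-session", "-d", "-s", session]]
    for a in agents:
        path = f"../worktrees/{a.name}"
        # One isolated checkout per agent, on its own feature branch.
        cmds.append(["git", "worktree", "add", "-b", a.branch, path, base_branch])
        # One tmux window per agent, started inside that worktree, pinned to one GPU.
        cmds.append(["tmux", "new-window", "-t", f"{session}:", "-n", a.name,
                     "-c", path, f"env CUDA_VISIBLE_DEVICES={a.gpu} {launch_cmd}"])
    return cmds


if __name__ == "__main__":
    for cmd in setup_commands(make_agents()):
        print(" ".join(cmd))
```

The point of the worktree-per-agent choice is that no two agents ever share a working directory, so a human can attach to the tmux session and take over any one of them without disturbing the rest.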

It’s a real research org, just made of prompts and processes instead of people.

The Part Everyone Gets Wrong

The parallelism question is basically solved. You can spin up N agents on N GPUs and run N experiments simultaneously. That part works fine. It’s also the least interesting part.

What Karpathy found is that the ceiling isn’t infrastructure. It’s scientific judgment.

The agents’ ideas are bad. Not the implementation, which is apparently solid. The thinking behind what to implement. They don’t design experiments carefully. They run variations that don’t make much sense. They skip strong baselines. They don’t ablate properly. They don’t control for runtime or compute.

His specific example is worth recounting: an agent “discovered” that increasing the hidden size of the network improves validation loss. As Karpathy immediately recognized, that is a completely spurious result. A bigger network will have lower validation loss in the infinite data regime, and it also trains for a lot longer, so the comparison never controlled for compute. That’s not a discovery. That’s confounding. He had to step in and explain that.
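To make the confound concrete, here is a small sketch of the kind of check the agent skipped: refuse to compare two runs on validation loss unless they used roughly the same training compute. It uses the common rule-of-thumb estimate of about 6 x parameters x tokens for training FLOPs. The `Run` type, the 5% tolerance, and every number below are made up for illustration and are not results from the experiment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    name: str
    n_params: int    # trainable parameters
    n_tokens: int    # training tokens seen
    val_loss: float  # final validation loss


def train_flops(run):
    """Rule-of-thumb training compute: roughly 6 * parameters * tokens."""
    return 6 * run.n_params * run.n_tokens


def compare(baseline, candidate, tolerance=0.05):
    """Only report a loss delta when the two runs are compute-matched."""
    ratio = train_flops(candidate) / train_flops(baseline)
    if abs(ratio - 1.0) > tolerance:
        return f"confounded: candidate used {ratio:.2f}x the baseline compute"
    delta = candidate.val_loss - baseline.val_loss
    return f"compute-matched: val_loss delta = {delta:+.4f}"


if __name__ == "__main__":
    # Hypothetical numbers, for illustration only.
    base = Run("base", 100_000_000, 2_000_000_000, 3.20)
    wide = Run("wide", 200_000_000, 2_000_000_000, 3.10)
    wide_matched = Run("wide-matched", 200_000_000, 1_000_000_000, 3.18)
    print(compare(base, wide))          # flagged as confounded
    print(compare(base, wide_matched))  # a comparison worth reading
```

Matching on wall-clock time or on a fixed token budget are other reasonable choices. What matters is that the comparison holds something fixed before anyone calls the result a finding.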

The agents are, in his words, “very good at implementing any given well-scoped and described idea but they don’t creatively generate them.”

Why This Reframes the Problem

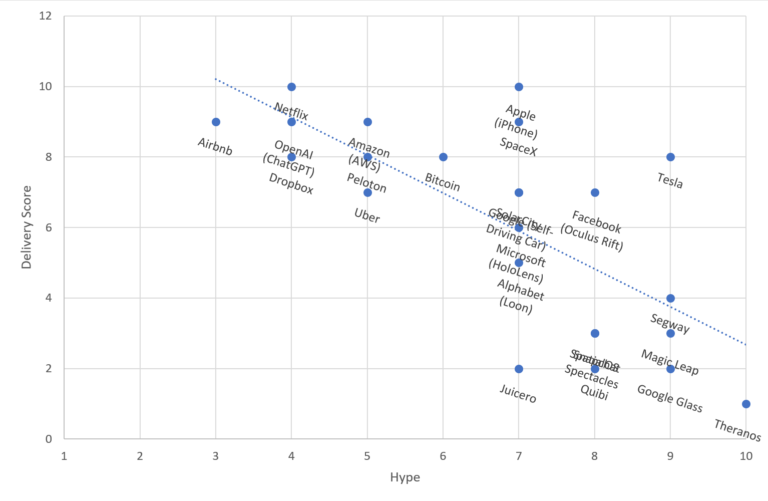

There’s been a lot of excitement about AI systems accelerating research by running more experiments per unit time. That framing assumes the bottleneck is execution speed. Karpathy’s experiment suggests the bottleneck is upstream: which experiments are worth running in the first place.

Good experimental design requires something these models don’t yet show consistently: knowing what you don’t know, recognizing confounds before you run into them, and having calibrated intuitions about which results would be genuinely surprising and which are trivially explained. That’s not a prompting problem you can easily patch.

What’s Genuinely Interesting Here

Karpathy’s framing of the goal is worth paying attention to. He says you’re now “programming an organization” and the source code is the collection of prompts, skills, tools, and processes that make it up. A daily standup becomes part of the org code. Optimizing nanochat pretraining is just one eval among many.
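One way to picture “org code” is as plain data that you can diff, version, and swap. The sketch below is my own illustration of that idea, not Karpathy’s implementation: the `Role` and `Org` types, the prompt strings, the tool names, and the number of juniors are all hypothetical. It expresses the two structures mentioned earlier, solo researchers and a chief scientist delegating to juniors.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Role:
    name: str
    prompt: str                                     # the role's instructions
    tools: List[str] = field(default_factory=list)  # hypothetical tool names
    reports_to: Optional[str] = None                # None means no manager


@dataclass
class Org:
    roles: List[Role]
    processes: List[str] = field(default_factory=list)  # e.g. a daily standup

    def reports_of(self, manager):
        """Names of the roles that report directly to `manager`."""
        return [r.name for r in self.roles if r.reports_to == manager]


def solo_org(n=8):
    """n independent researchers, nobody delegating."""
    roles = [Role(f"researcher-{i}", "Pick and run your own experiments.", ["git", "gpu"])
             for i in range(n)]
    return Org(roles=roles)


def chief_scientist_org(n_juniors=7):
    """One chief scientist who assigns work to junior researchers."""
    chief = Role("chief", "Propose experiments and assign them to juniors.", ["git"])
    juniors = [Role(f"junior-{i}", "Implement the assigned experiment.", ["git", "gpu"],
                    reports_to="chief") for i in range(n_juniors)]
    return Org(roles=[chief] + juniors, processes=["daily standup"])


if __name__ == "__main__":
    org = chief_scientist_org()
    print(org.reports_of("chief"))
    print(org.processes)
```

Seen this way, changing the org structure is a code change: you edit the roles and processes, rerun the same eval, and compare.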

That’s a real shift in how to think about this. The question isn’t whether agents can do research. It’s whether you can design a research org, at the process and prompt level, that generates consistent progress on arbitrary tasks. That’s a software architecture problem as much as an AI capability problem.

I think that’s the right frame, and it suggests the near-term work is less about waiting for smarter models and more about building better scaffolding, better evals for experimental design quality, and better ways for humans to stay in the loop on scientific judgment without becoming the bottleneck.

Where This Leaves Us

Current LLMs are good junior engineers who can execute a well-defined task with precision. They’re not yet good scientists who can look at a result, smell a confound, and redesign the experiment on the fly. That gap matters enormously for autonomous research pipelines.

The honest takeaway from Karpathy’s experiment: the org structure works, the tooling works, the execution works. What’s missing is the part that’s hardest to fake, which is genuine scientific taste. Whether that’s a capability gap that closes with scale, or something that requires a fundamentally different training approach, I don’t think anyone actually knows yet.

That uncertainty is more useful than false confidence in either direction.

#AIResearch #MultiAgent #LLM #MachineLearning #AIEngineering