Microsoft open sources BitNet: 100B parameter LLM running on a single CPU via 1.58-bit ternary weights

Microsoft just open sourced BitNet, and I think people are sleeping on how significant this actually is. While most of the AI conversation stays locked on GPU clusters, foundation model releases, and cloud API pricing, something quietly landed that could change the entire equation for how and where large language models actually run.

Let me explain why this matters more than the headlines suggest.

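BitNet is an inference framework that runs a 100 billion parameter LLM on a single CPU. Not a server rack. Not a cloud instance with a hefty hourly rate. A CPU. The kind sitting inside the laptop you’re reading this on right now. The trick is deceptively simple: instead of storing model weights as 32-bit or 16-bit floating point numbers, BitNet uses 1.58-bit ternary weights. Every weight in the model is one of three values: negative one, zero, or positive one. That’s it. Three possible values carry log2(3), or about 1.58, bits of information, which is where the name comes from. With weights like that, the multiplications inside a matrix product collapse into additions and subtractions: add the input where the weight is positive one, subtract it where the weight is negative one, skip it where the weight is zero. And because your CPU was already built to handle integer operations natively, the math becomes fast, efficient, and genuinely practical without specialized hardware.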
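To make the "no multiplications" point concrete, here is a minimal sketch in plain Python. It is not how BitNet's optimized kernels are written; the function names and the toy matrix are hypothetical, and the integer activations are an assumption made for illustration. It only shows that a ternary matrix-vector product can be computed with nothing but adds, subtracts, and skips.

```python
import math

# Information content of a three-valued weight: log2(3), about 1.58 bits.
BITS_PER_TERNARY_WEIGHT = math.log2(3)


def ternary_dot(weights, activations):
    """Dot product where every weight is -1, 0, or +1.

    No multiplication is performed: +1 adds the activation,
    -1 subtracts it, and 0 skips it.
    """
    total = 0
    for w, x in zip(weights, activations):
        if w == 1:
            total += x
        elif w == -1:
            total -= x
        elif w != 0:
            raise ValueError(f"weight {w} is not ternary")
    return total


def ternary_matvec(weight_rows, activations):
    """Apply a ternary weight matrix (a list of rows) to a vector."""
    return [ternary_dot(row, activations) for row in weight_rows]


# Hypothetical toy values, chosen only for illustration.
W = [
    [1, 0, -1, 1],
    [-1, -1, 0, 1],
]
x = [3, -2, 5, 7]  # stand-in for integer-quantized activations

y = ternary_matvec(W, x)
```

A production kernel would pack several weights into each byte and process many of them per instruction, but the arithmetic it performs is the same add, subtract, or skip pattern.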

The numbers are hard to dismiss once you actually sit with them. Five to seven tokens per second on a single CPU for a 100 billion parameter model. Up to six times faster than llama.cpp on x86 hardware. Energy consumption drops by as much as 82 percent. The memory needed for the weights shrinks by roughly 10 to 20 times compared to 16-bit and 32-bit models (16 / 1.58 is about 10, and 32 / 1.58 is about 20). And accuracy? It barely moves. You’re not trading a Ferrari for a bicycle here. You’re trading a Formula One race car for something that can actually drive on normal roads.
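A quick back-of-the-envelope check on the memory side, as a sketch. It counts weight storage only and ignores activations, the KV cache, and runtime overhead. The 2-bit figure is an assumption about a simple packing scheme, included because ternary values do not fit neatly into 1.58 physical bits.

```python
import math


def weight_memory_gb(num_params, bits_per_weight):
    """Weight storage in gigabytes (10^9 bytes), weights only."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 100e9  # the 100 billion parameter model discussed above

fp32_gb = weight_memory_gb(PARAMS, 32)
fp16_gb = weight_memory_gb(PARAMS, 16)
ternary_ideal_gb = weight_memory_gb(PARAMS, math.log2(3))  # theoretical minimum
ternary_2bit_gb = weight_memory_gb(PARAMS, 2)  # assumed 2-bit packing

# Reduction factors relative to the theoretical ternary size.
ratio_vs_fp16 = fp16_gb / ternary_ideal_gb
ratio_vs_fp32 = fp32_gb / ternary_ideal_gb
```

Under these assumptions the full-precision weights need 400 GB at 32 bits or 200 GB at 16 bits, while the ternary weights land at roughly 20 to 25 GB. That is the difference between a multi-GPU server and a machine with a generous amount of ordinary RAM.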

Why the Quantization Framing Misses the Point

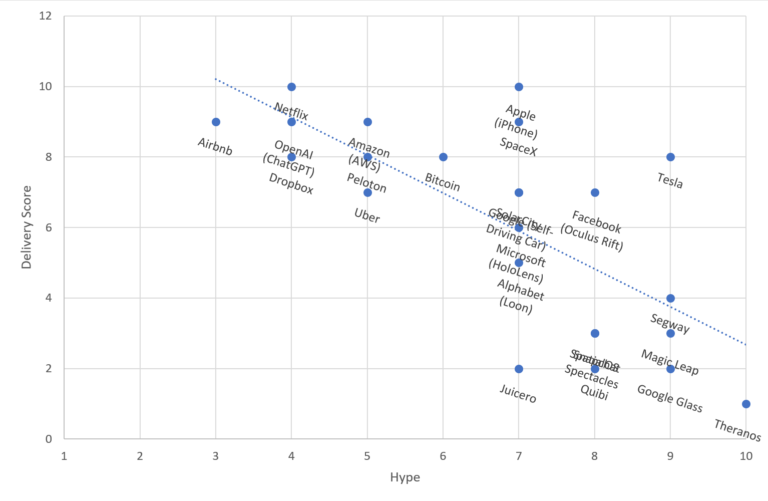

A lot of people will hear “1.58-bit weights” and immediately file this under aggressive quantization — a compression trick with quality tradeoffs you learn to tolerate. That framing undersells what’s happening. BitNet isn’t compressing a full-precision model after training. The architecture is trained with ternary weights from the start. The model learns to represent knowledge within that constraint rather than having precision squeezed out of it afterward. That architectural difference is why the accuracy holds up in a way that traditional aggressive quantization never quite managed convincingly at this scale.
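For a sense of what "trained with ternary weights" can look like, here is a minimal sketch of the absmean scheme as I understand it from the BitNet b1.58 paper: scale the weights by their mean absolute value, round, and clip to the range -1 to +1. Treat the details as an assumption rather than the exact implementation. The function name and the sample values are hypothetical.

```python
def absmean_ternarize(weights, eps=1e-5):
    """Map full-precision weights to {-1, 0, +1} plus one shared scale.

    Assumed scheme: divide by the mean absolute value, round to the
    nearest integer, then clip to the range [-1, 1].
    """
    scale = sum(abs(w) for w in weights) / len(weights) + eps
    ternary = [max(-1, min(1, round(w / scale))) for w in weights]
    return ternary, scale


# Hypothetical full-precision weights kept around during training.
latent = [0.9, -0.05, -1.4, 0.3, 0.02, -0.6]

ternary, scale = absmean_ternarize(latent)
```

In quantization-aware training of this kind, the forward pass uses the ternary values while the optimizer keeps updating the full-precision copies, so the model adapts to the constraint at every step instead of meeting it for the first time after training is over.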

I’ve spent a lot of time thinking about the practical implications of running large models locally, and the wall has always been the same: hardware cost and energy cost. Running a 100B parameter model through a standard stack has required either significant GPU memory, a cloud bill that adds up fast, or painful compromises in model capability. BitNet doesn’t just nudge those constraints. For a huge range of real-world use cases, it takes the GPU and the cloud bill out of the picture.

What This Opens Up That Wasn’t Possible Before

Think about the deployment scenarios that suddenly become viable. Edge devices. Air-gapped environments. Healthcare applications where data never leaves the building. Developers in regions where cloud compute is expensive or unreliable. Small businesses that want genuine AI capability without signing up for recurring infrastructure costs. Researchers running experiments without burning through compute budgets. The consumer laptop as a legitimate inference machine for serious workloads.

Open sourcing BitNet means the community now gets to push this forward. People will build on it, optimize it further, and find applications Microsoft’s team hasn’t thought of yet. The combination of an accessible weight format, CPU-native performance, and a permissive release is the kind of thing that quietly restructures an ecosystem over the next few years.

The GPU-dependent, cloud-tethered model of AI deployment has always carried an implicit assumption that serious capability required serious hardware. BitNet challenges that assumption directly, at 100 billion parameters, running on hardware everyone already owns. That’s not a footnote in the AI story this year. That’s a chapter.