The Context Window Arms Race Is Solving the Wrong Problem

Everyone in AI tooling is chasing bigger context windows. Gemini 1.5 Pro hit 1 million tokens. Claude’s window sits at 200k. The marketing around these numbers is relentless, as if raw capacity were the only thing standing between developers and a perfect AI coding session.

It isn’t.

The Real Problem Nobody Talks About

Here’s what actually happens in a long coding session with any AI assistant. You start fresh. The model is sharp: it knows your constraints and remembers what you told it about your architecture. Two hours later, it’s contradicting itself. It suggests code that reintroduces a bug you both agreed was fixed forty minutes ago. It forgets the naming convention you established at the start. The output keeps degrading the longer the session runs.

This isn’t a model quality issue. It’s a capacity issue. You are filling a finite container, and not all the content going into that container is equally useful. The conversation history accumulates fast. Every back-and-forth, every code block, every clarification, every dead end you explored and abandoned: all of it sits in that window, eating up space and attention. The model isn’t choosing what to pay attention to based on what matters to you. It’s working with a flat, chronological dump of everything.

Bigger windows just delay the problem. They don’t solve it.

Auto Dream Is an Honest Acknowledgment of This

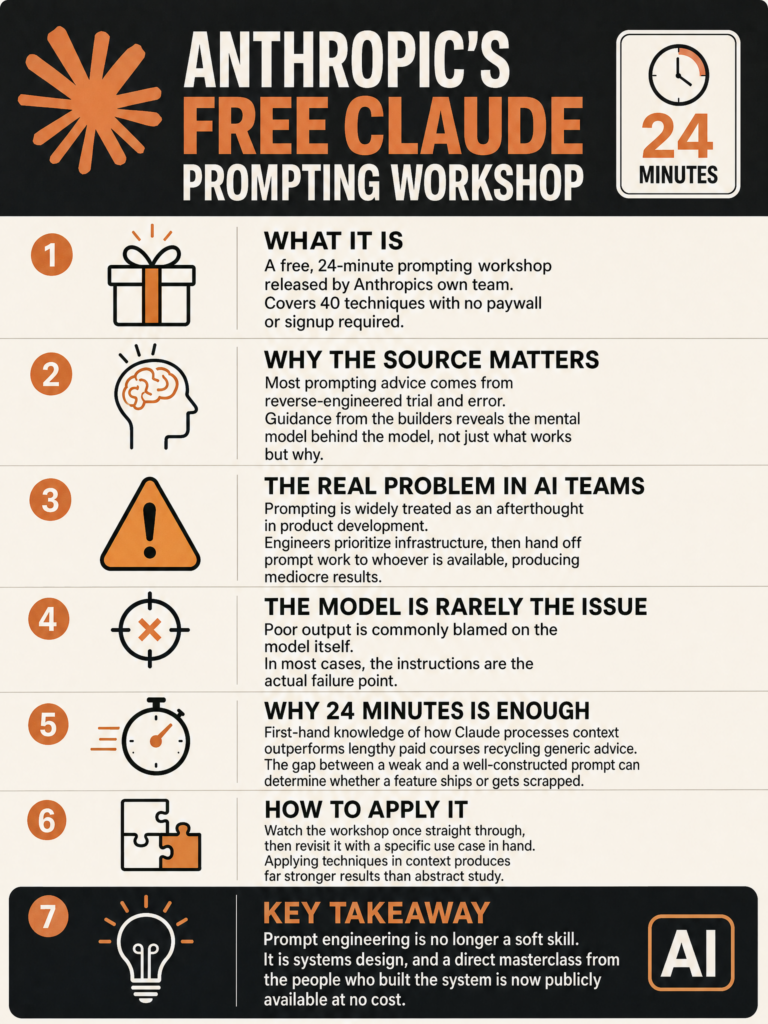

Anthropic is quietly rolling out a feature called Auto Dream for Claude Code. The idea, as spotted by Min Choi on March 27th (https://x.com/minchoi/status/2037581064080646545), is that Claude periodically compresses and cleans its working memory mid-session to prevent degradation as context accumulates.

I think this is the right instinct. It is an admission that the infinite-context fantasy doesn’t hold up in practice, and that quality of context matters more than raw quantity. That is an intellectually honest position.

But compression is still a workaround. The deeper question is whether we’re curating what goes into the window in the first place, not just managing the mess after it accumulates.

Quality Over Quantity

When I build AI-powered tools, the most reliable performance gains I see don’t come from giving the model more context. They come from giving it better context. Structured, relevant, concise. Strip out the conversational filler. Summarize resolved threads. Keep the active constraints visible and the dead ends invisible.
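To make that concrete, here is a minimal sketch of what "curating" could look like before anything reaches the model. Everything in it is an assumption for illustration: the `Entry` type, the four `kind` labels, and the `curate` function are hypothetical names, not part of Claude Code or any other tool, and a real system would need some way to assign those labels in the first place.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    # Hypothetical labels: "constraint", "resolved", "dead_end", or "active".
    kind: str
    text: str
    # Optional one-line summary, used in place of the full text for resolved threads.
    summary: str = ""


def curate(entries: list[Entry]) -> str:
    """Build a compact context string from tagged session entries."""
    constraints = [e.text for e in entries if e.kind == "constraint"]
    # Resolved threads are reduced to their summary when one exists.
    resolved = [e.summary or e.text for e in entries if e.kind == "resolved"]
    active = [e.text for e in entries if e.kind == "active"]
    # Entries tagged "dead_end" are deliberately left out.

    lines: list[str] = []
    for title, items in (
        ("Constraints", constraints),
        ("Resolved", resolved),
        ("Active task", active),
    ):
        if items:
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
    return "\n".join(lines)


session = [
    Entry("constraint", "Use snake_case for all function names"),
    Entry("dead_end", "Tried an in-memory cache; abandoned"),
    Entry("resolved", "Long back-and-forth about pagination...",
          summary="Fixed off-by-one in paginate()"),
    Entry("active", "Add retry logic to fetch_user"),
]
print(curate(session))
```

The point of the sketch is the ordering of priorities, not the mechanics: constraints go first and in full, resolved work shrinks to a line, and abandoned approaches never reach the prompt.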

Andrej Karpathy made a related observation recently when discussing his app-building experience. The code itself wasn’t the hard part. The surrounding complexity was. The same principle applies here. The tokens you’re burning on conversational history and abandoned approaches are tokens not being spent on the current problem.

The model can only attend to so much, and attention isn’t evenly distributed across a 200k token window.

What Good Context Management Actually Looks Like

The tools that will win this space aren’t the ones with the biggest windows. They’re the ones with the best memory architecture. That means persistent project memory that survives session resets; structured separation between constraints, history, and active task state; and something like Auto Dream, but proactive rather than reactive, deciding what to compress before performance degrades rather than after.
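As a rough sketch of those three ideas together, assume a small `ProjectMemory` record saved to a JSON file, with constraints, history, and the active task kept in separate fields, plus a `compact_if_needed` check that fires well before the budget is full. All names, the 4-characters-per-token estimate, and the 50% threshold are assumptions made for illustration. This is not how Auto Dream works internally, and the "summary" here is a placeholder where a real tool would presumably call a model.

```python
import json
from dataclasses import asdict, dataclass, field
from pathlib import Path


@dataclass
class ProjectMemory:
    # Kept as separate fields so constraints are never compacted with history.
    constraints: list[str] = field(default_factory=list)
    history: list[str] = field(default_factory=list)
    active_task: str = ""

    def save(self, path: Path) -> None:
        """Persist memory so it survives a session reset."""
        path.write_text(json.dumps(asdict(self), indent=2))

    @classmethod
    def load(cls, path: Path) -> "ProjectMemory":
        """Load saved memory, or start empty if no file exists yet."""
        if not path.exists():
            return cls()
        return cls(**json.loads(path.read_text()))


def estimate_tokens(text: str) -> int:
    # Crude assumption: about 4 characters per token. A real tokenizer differs.
    return max(1, len(text) // 4)


def compact_if_needed(mem: ProjectMemory, budget: int,
                      threshold: float = 0.5, keep_recent: int = 2) -> bool:
    """Shrink history once it passes threshold * budget tokens.

    Returns True if compaction happened. Constraints and the active task
    are never touched.
    """
    used = sum(estimate_tokens(h) for h in mem.history)
    if used <= budget * threshold or len(mem.history) <= keep_recent:
        return False
    split = len(mem.history) - keep_recent
    older, recent = mem.history[:split], mem.history[split:]
    # Placeholder only: a real tool would summarize `older` instead.
    mem.history = [f"[summary of {len(older)} earlier entries omitted]"] + recent
    return True
```

The design choice worth noticing is the threshold: compaction triggers at a fraction of the budget, which is the "proactive rather than reactive" part, and only the history field is ever eligible for it.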

Some teams are already building toward this. The agentic coding tools that treat memory as a first-class engineering problem, not an afterthought, are the ones producing consistent results on long-running tasks.

Context window size is a spec sheet number. Context quality is what you feel at hour three of a debugging session.

Where This Goes

Auto Dream is a step. It won’t be the last one. The next few years of AI coding tools will probably be defined less by model capability and more by memory architecture. How does the tool decide what to keep? What to compress? What to surface when you return to a task after a week away?

Those are hard problems. They require real design thinking about how developers actually work, not just how much text a model can technically process at once.

The context window arms race gave us bigger boxes. What we needed was a smarter way to pack them.

#AIEngineering #ClaudeCode #LLMs #SoftwareDevelopment #AITools