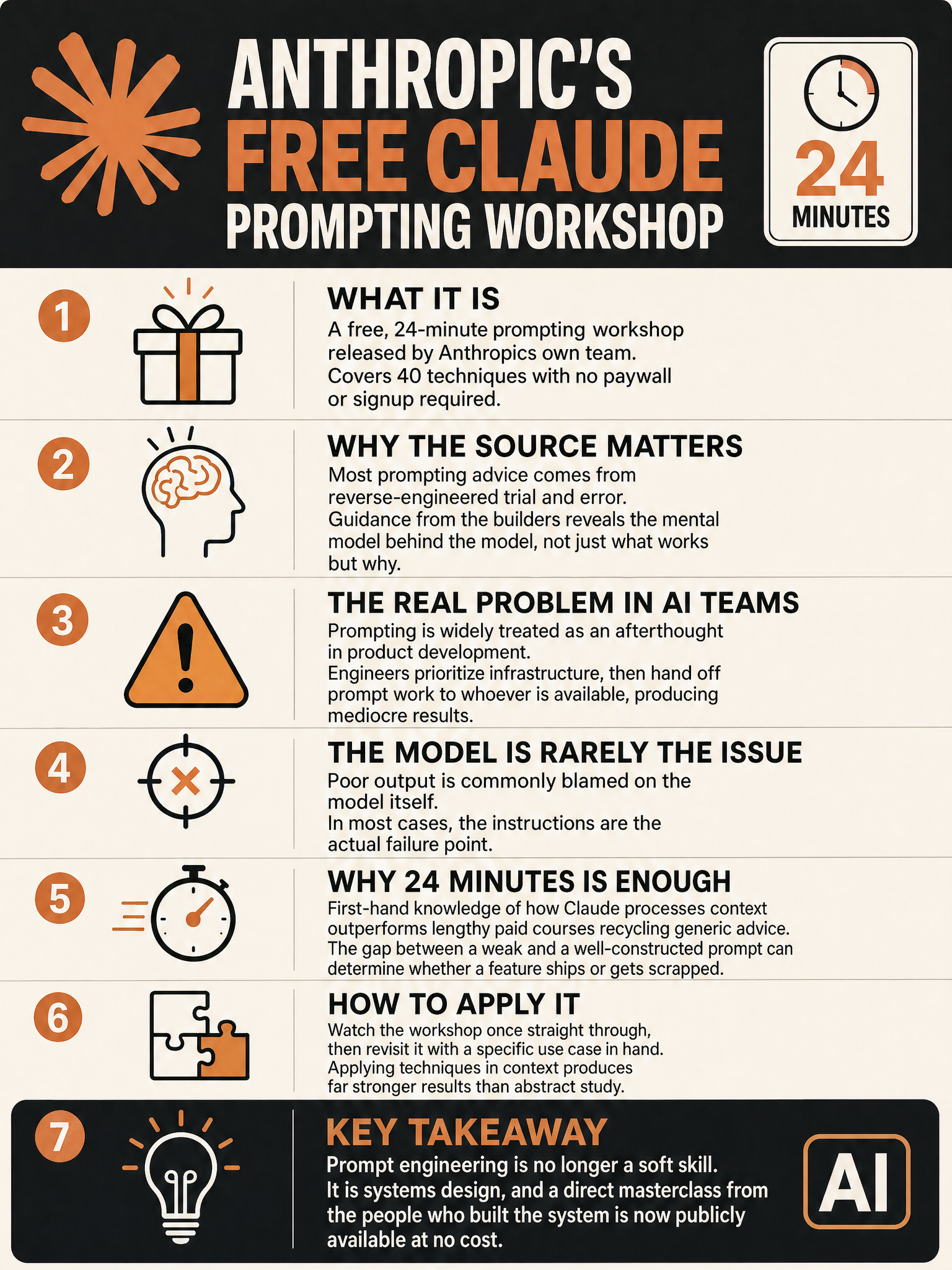

Anthropic releases free 24-minute prompting workshop with 40 techniques taught by the Claude team

Anthropic Just Released a Free Prompting Workshop. Pay Attention.

Most prompting advice you find online was written by people who poked at a model until something worked, then published it. Some of that advice is genuinely useful. A lot of it is pattern-matching dressed up as insight. When Anthropic’s own team releases a 24-minute workshop covering 40 techniques, that is a fundamentally different thing.

This is not a third-party reverse-engineering job. This is the people who designed Claude telling you how they intended it to be used.

That distinction matters more than most people seem to realize right now.

The Source Problem in Prompt Engineering

There is a recurring credibility issue in the prompting world. Anyone can write a thread, package it as a “masterclass,” and charge for it. The market has been flooded with this for two years. Some authors have real experience. Many are just faster at reposting.

What makes Anthropic’s workshop different is provenance. The techniques being taught come from the team that built the model’s training process, designed its behavior, and has the most direct line of sight into why certain prompting patterns work while others fall apart. You are not getting empirical guesses. You are getting the mental model behind the model.

That is not a small thing.

40 Techniques, No Paywall

The workshop is 24 minutes long and covers 40 distinct techniques. No subscription. No upsell. Just the material, free, from the source.

I want to be direct about what that number means in practice. Forty techniques in 24 minutes works out to roughly 36 seconds per technique, which is a fast pace. This is not a course designed to hand-hold you through the basics. It assumes you are already using Claude and want to use it better. If you go in expecting a gentle introduction, you will probably miss half of it.

Watch it twice. Take notes the first time around.

The Real Problem This Solves

I keep running into the same situation inside teams that are actually shipping AI products. The infrastructure gets built carefully. The model gets deployed. Then someone writes a prompt in 10 minutes and the team wonders why outputs are inconsistent.

Prompting is treated as the easy part. It is not.

The gap between a team that treats prompting as an engineering discipline and one that treats it as vibes is visible immediately, both in output quality and in how much time gets spent debugging behavior that could have been specified correctly upfront.
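
To make "specified correctly upfront" concrete, here is a minimal sketch of what treating a prompt as an engineering artifact could look like: the prompt gets a version, its output requirements are written down, and a check runs over sample inputs before anything ships. This is my own illustration, not a technique taken from the workshop. `PromptSpec`, `check_outputs`, and `fake_model` are hypothetical names, and `fake_model` is a stand-in for whatever real model call your team uses.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class PromptSpec:
    """A prompt treated as a versioned artifact with explicit output requirements."""
    version: str
    template: str                      # must contain an {input} placeholder
    required_phrases: Tuple[str, ...]  # substrings every output must contain


def check_outputs(
    spec: PromptSpec,
    model: Callable[[str], str],
    inputs: List[str],
) -> List[str]:
    """Run the prompt over sample inputs and return a description of each failure."""
    failures = []
    for text in inputs:
        output = model(spec.template.format(input=text))
        for phrase in spec.required_phrases:
            if phrase not in output:
                failures.append(
                    f"{spec.version}: missing {phrase!r} for input {text!r}"
                )
    return failures


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call (assumption, not a real API)."""
    # Echo the last line of the prompt, which is the user input in this template.
    return "Summary: " + prompt.splitlines()[-1]


spec = PromptSpec(
    version="v1",
    template="Summarize the text below. Begin your answer with 'Summary:'.\n{input}",
    required_phrases=("Summary:",),
)

failures = check_outputs(
    spec, fake_model, ["Quarterly revenue rose.", "The deploy failed."]
)
print(failures)
```

Swapping `fake_model` for a real call and running the same check whenever the template changes is the kind of habit that separates a specified prompt from one written in 10 minutes. Substring checks are deliberately crude here; a real team would likely need richer assertions.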

A workshop like this gives teams a shared vocabulary and a shared set of practices. That alone is worth the 24 minutes.

Why “From the Builders” Is a Different Category

There is a version of this post where I say something polite about the abundance of good prompting resources available. I am not going to do that.

Most of what circulates is noise. The signal-to-noise ratio in prompt engineering content is genuinely bad, and the reason is that it costs nothing to publish techniques that sound plausible but have no grounding in how these systems actually work internally.

When Anthropic publishes this, they are accountable to it in a way no third-party author is. If a technique they teach produces bad results, that reflects directly on their model and their team. That accountability changes the quality of what gets published.

What You Should Actually Do With This

Watch the workshop. Bring your team if you have one. Treat the 40 techniques not as a checklist but as a framework for thinking about how language models process instructions.

Then go back to whatever prompts you are currently running in production and ask honestly whether they reflect any of this thinking. Most will not.

The upgrade is not in knowing the techniques. It is in developing the habit of applying them before you ship something, not after you notice the outputs are wrong.

Anthropic made the barrier to entry zero. There is no excuse left for treating prompting as an afterthought.


#AIEngineering #PromptEngineering #Claude #Anthropic #MachineLearning #GenerativeAI