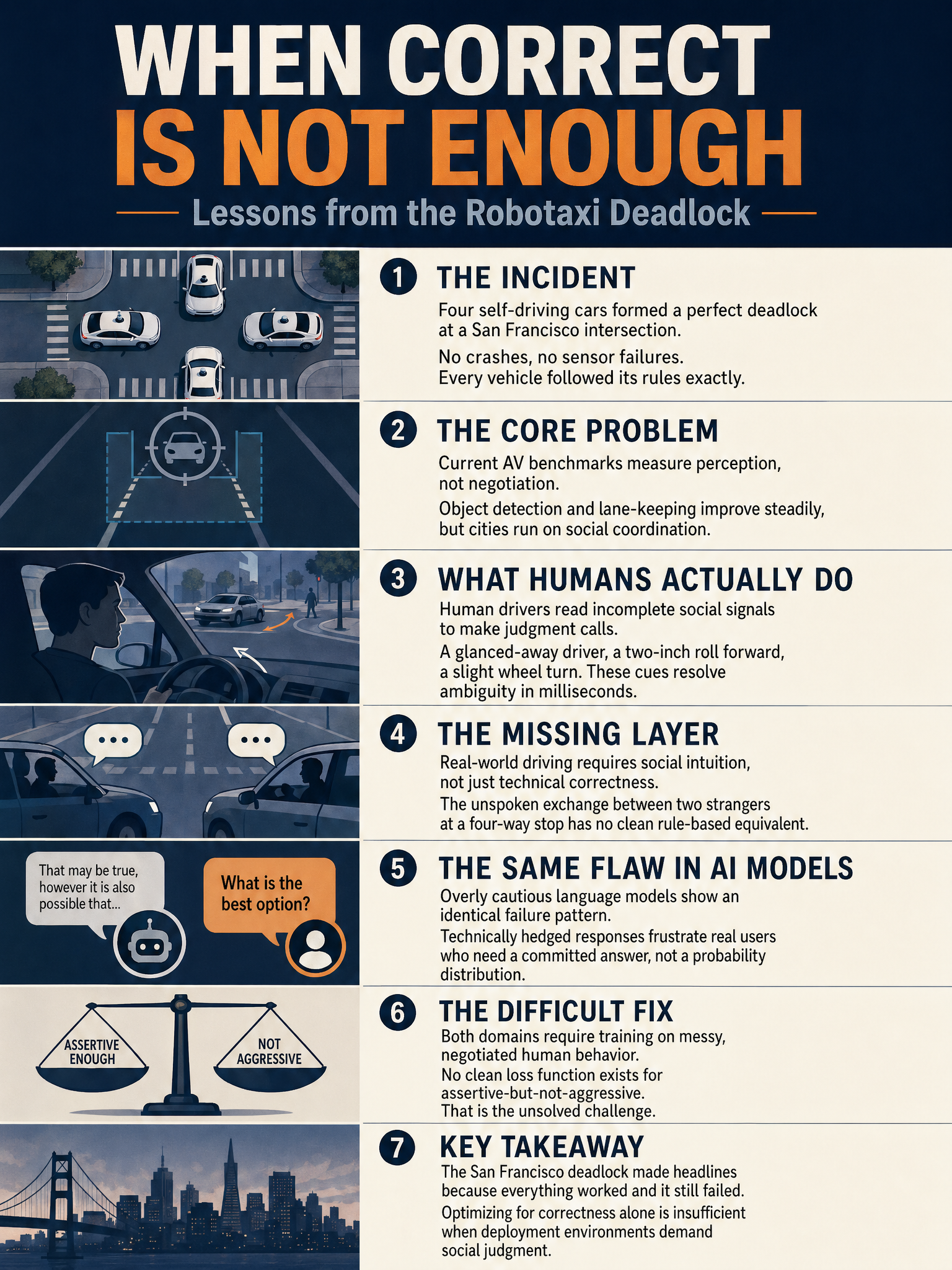

Robotaxi deadlock in San Francisco reveals gap between technical correctness and social judgment in autonomous systems

The Politeness Problem: When Self-Driving Cars Are Too Correct

Something quietly embarrassing happened at a San Francisco intersection recently, and I think it exposes a flaw in how the entire autonomous vehicle industry thinks about the problem it’s solving.

Several robotaxis arrived at the same intersection and got stuck. Not because of sensor failures. Not because of weather or software crashes. They got stuck because every single vehicle was doing exactly what it was designed to do. Yielding politely. Waiting for a clear path. Following the rules with machine precision.

Perfect behavior. Total paralysis.

The Benchmark Problem

Here’s what bothers me most about this incident. The AV industry measures progress almost entirely through perception and low-level control metrics: object detection accuracy, lane-keeping performance, reaction time to obstacles. Waymo and others have published genuinely impressive numbers on these dimensions, and those improvements matter. Nobody wants a car that can’t see a pedestrian.

But the deadlock had nothing to do with perception. Every car could see everything perfectly. The problem was that none of them could make a social decision. They couldn’t read the room.

Cities don’t run on perception. They run on negotiation. And right now, we don’t have a good benchmark for that.

What Human Drivers Actually Do

Think about what actually happens at a four-way stop with ambiguous timing. A human driver makes eye contact. Or they inch forward slightly, a half-second of movement that says “I’m going.” Another driver catches that signal and waits. The whole exchange takes maybe 800 milliseconds and nobody can fully articulate the rules they followed.

That micro-negotiation is load-bearing infrastructure for urban traffic. It’s not a bug in human driving. It’s a feature we built cities around. And it’s completely invisible to a system trained to optimize for correctness.

A robotaxi that waits for full certainty before proceeding will always lose that negotiation to a human who’s comfortable with 70% certainty and a little assertiveness. Put four robotaxis together with no humans to anchor the negotiation, and you get the deadlock.
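To make that concrete, here is a toy simulation. It is a sketch under loud assumptions, not a model of any real planner: the `Driver` class, the `confidence_clear` formula (each waiting car shaves 10% off your confidence), and the 0.99 robotaxi threshold are all invented for illustration. Only the 70% human figure comes from the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class Driver:
    name: str
    go_threshold: float  # confidence this driver needs before entering


def confidence_clear(others_waiting: int) -> float:
    """Toy belief that nobody else will enter; each waiting car shaves off 10%."""
    return 0.9 ** others_waiting


def simulate(drivers, max_ticks=20):
    """Let at most one driver clear per tick; return (cleared, stuck) name lists."""
    waiting = list(drivers)
    cleared = []
    for _ in range(max_ticks):
        confidence = confidence_clear(len(waiting) - 1)
        willing = [d for d in waiting if confidence >= d.go_threshold]
        if not willing:
            break  # everyone is yielding to everyone else (or nobody is left)
        mover = min(willing, key=lambda d: d.go_threshold)  # most assertive goes
        waiting.remove(mover)
        cleared.append(mover.name)
    return cleared, [d.name for d in waiting]


ROBOTAXI = 0.99  # assumed: waits for near-full certainty
HUMAN = 0.70     # the 70% comfort level mentioned above

all_robots = [Driver(f"robotaxi_{i}", ROBOTAXI) for i in range(4)]
mixed = [Driver("robotaxi_0", ROBOTAXI)] + [Driver(f"human_{i}", HUMAN) for i in range(3)]

print(simulate(all_robots))  # nobody clears, all four stay stuck
print(simulate(mixed))       # humans clear first, the robotaxi goes last
```

In this toy world the lone robotaxi always goes last, once the intersection is empty, and four identical robotaxis never go at all. Nothing is broken in either case. The thresholds are simply symmetric and too high.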

Why This Is a Hard ML Problem

I want to be precise about where the difficulty actually lives, because “teach the car to be more assertive” sounds simple and isn’t.

The car doesn’t just need to learn when to yield and when to proceed. It needs to model other agents’ internal states from behavioral signals. It needs to predict whether that slight forward movement from the car to its left is an intentional go-signal or sensor noise. It needs to understand that the right move is sometimes the slightly irrational one, the move that breaks symmetry and gets traffic flowing.
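One textbook way to break symmetry among identical agents is randomization, the same idea behind random backoff in shared-medium networks. The sketch below is my illustration, not something any AV vendor is known to ship: `negotiate` and `go_probability` are hypothetical names, and real vehicles would creep continuously rather than play discrete rounds.

```python
import random


def negotiate(n_cars: int, go_probability: float, rng: random.Random,
              max_rounds: int = 1000) -> int:
    """Rounds until exactly one of n identical cars claims the intersection.

    Each round, every car independently creeps forward with probability
    go_probability. If exactly one car creeps, it wins the right of way.
    If none or several creep, everyone resets and tries again.
    Returns the number of rounds used, or -1 if max_rounds ran out.
    """
    for round_number in range(1, max_rounds + 1):
        creepers = sum(rng.random() < go_probability for _ in range(n_cars))
        if creepers == 1:
            return round_number
    return -1


rng = random.Random(0)
print(negotiate(4, 0.0, rng))   # always yield: never resolves
print(negotiate(4, 1.0, rng))   # always go: never resolves either
print(negotiate(4, 0.25, rng))  # a little randomness: resolves quickly
```

With n cars each creeping with probability p, the chance that exactly one moves in a given round is n·p·(1−p)^(n−1), which peaks at p = 1/n. For four cars that is about 42% per round, so the standoff typically ends within a few rounds. A fully deterministic policy, whether polite or pushy, never ends it.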

That’s a fundamentally different problem from detecting a stop sign. It’s closer to the theory-of-mind problem in cognitive science, applied in real time at 30 frames per second.
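As a rough illustration of what frame-by-frame intent inference could look like, here is a minimal Bayesian sketch. Everything numeric is an assumption of mine: a creeping car is taken to move about 1 cm per frame, and measurement noise is taken to be Gaussian with a 1 cm standard deviation. The function names are hypothetical too.

```python
import math


def gaussian_pdf(x: float, mean: float, std: float) -> float:
    """Density of a normal distribution at x."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))


def update_go_belief(prior_go: float, displacement_m: float,
                     creep_mean_m: float = 0.01, noise_std_m: float = 0.01) -> float:
    """One Bayes update of P(other car intends to go) from one frame.

    displacement_m is the measured forward movement in that frame.
    Hypothesis "go":   true movement is creep_mean_m plus noise (assumed).
    Hypothesis "stay": true movement is zero plus the same noise (assumed).
    """
    like_go = gaussian_pdf(displacement_m, creep_mean_m, noise_std_m)
    like_stay = gaussian_pdf(displacement_m, 0.0, noise_std_m)
    evidence = like_go * prior_go + like_stay * (1.0 - prior_go)
    if evidence == 0.0:
        return prior_go  # measurement far outside both models: learn nothing
    return like_go * prior_go / evidence


def track(displacements, prior_go: float = 0.5) -> float:
    """Fold a sequence of per-frame displacements into a final belief."""
    belief = prior_go
    for d in displacements:
        belief = update_go_belief(belief, d)
    return belief


print(track([0.01] * 10))  # ten frames of steady creep: belief climbs toward 1
print(track([0.0] * 10))   # ten frames of stillness: belief falls toward 0
print(track([0.005] * 10)) # ambiguous movement: belief stays near the prior
```

The last case is the uncomfortable one. When the signal sits halfway between "creeping" and "noise", no number of frames settles the question, and the car still has to decide.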

Current reinforcement learning approaches penalize safety violations heavily, which trains policies to be conservative under uncertainty. That’s the correct engineering choice in most situations. But conservatism and social assertiveness are in direct tension, and nobody has cleanly solved how to balance them in mixed human-machine traffic.

What Comes Next

I don’t think this is fatal for autonomous vehicles. But I do think it points toward where the next decade of serious research has to go.

Perception is largely a solved problem at the benchmark level. The gains from here are diminishing. Social reasoning under uncertainty is nowhere near solved, and it’s arguably the harder problem because the ground truth is ambiguous by design.

The deadlock wasn’t a failure of technology. It was a reminder that technical correctness and practical judgment are different things, and we’ve been measuring one while assuming the other would follow. It won’t.

Until AV systems can navigate the informal protocols that humans use to coordinate in real time, they’re going to keep having moments where being right makes them useless. That’s a design problem worth taking seriously.

#AutonomousVehicles #MachineLearning #AIEngineering #SelfDrivingCars #RoboticSystems