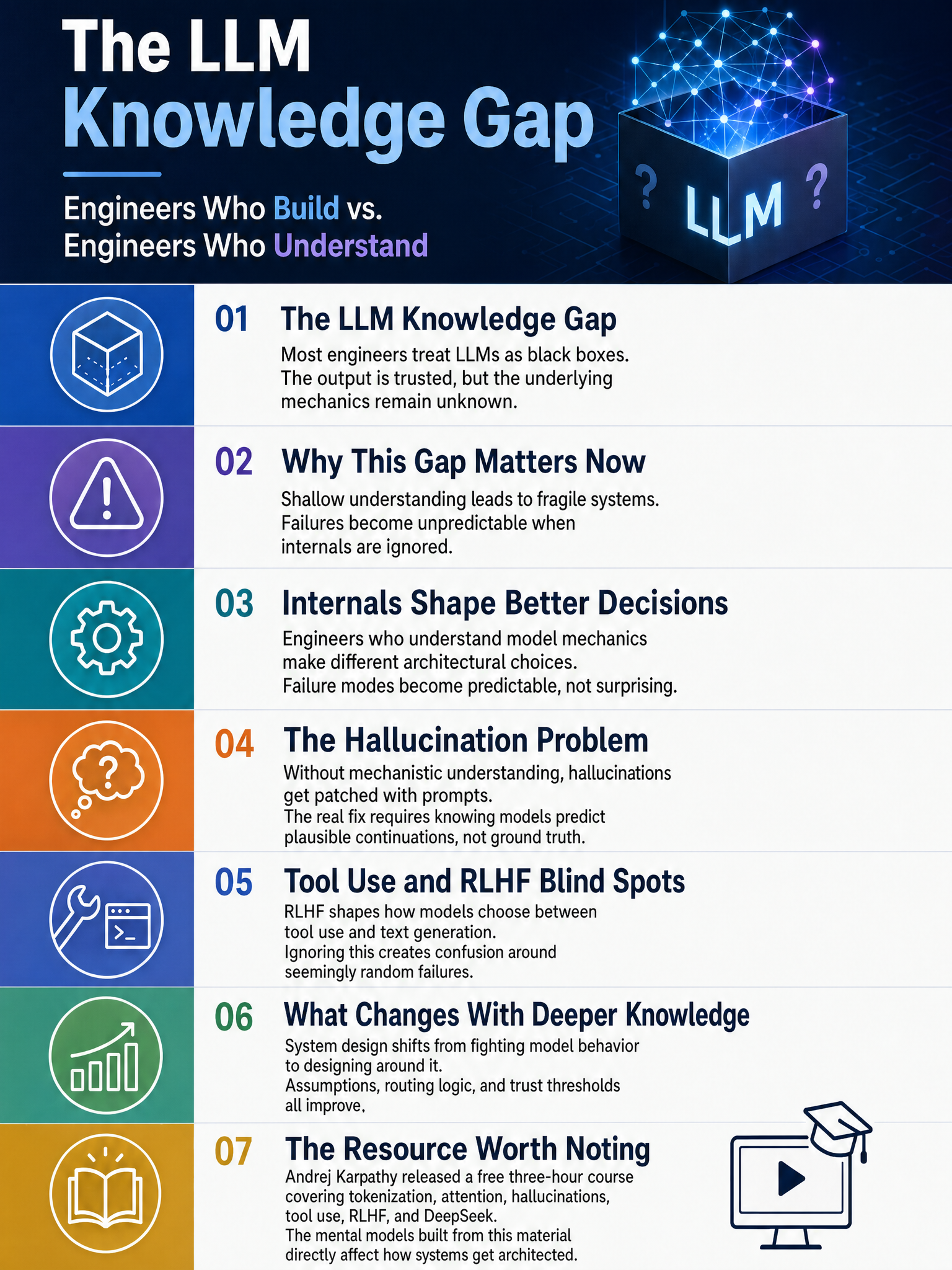

Insight on why understanding LLM internals changes how engineers architect AI systems, prompted by Karpathy’s new course

The Black Box Problem: Why LLM Internals Should Change How You Build

Most engineers treating LLMs like black boxes are building on sand. That sounds harsh, but I’ve watched it happen enough times to say it plainly. The system works, demos great, ships to production, and then fails in ways that feel random but absolutely are not. The failure was baked in from the start, and it was predictable if you understood what was actually happening inside the model.

Andrej Karpathy just dropped a free 3-hour course that covers tokenization, attention, hallucinations, tool use, RLHF, and more. That is everything you’d need to stop treating these models as magic and start treating them as engineering systems with known properties and known failure modes. I think every engineer building on top of LLMs should watch it. Not to become a researcher. To become a better architect.

Why Black Boxes Break at the Worst Time

The problem is not that engineers don’t care. It’s that the abstraction layer is so good that curiosity never gets triggered until something goes wrong. The API returns text, the text looks right, and you move on.

But tokenization alone can wreck you if you don’t think about it. The way a model chunks input affects retrieval, affects reasoning over structured data, affects how arithmetic gets handled. If you’re doing anything with numbers, code, or non-English text and you’ve never looked at how your input actually tokenizes, you’re flying blind. That’s not a prompt engineering problem. That’s a system design problem.
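
To make the tokenization point concrete, here is a minimal sketch. It is a toy: the vocabulary is invented and the greedy longest-match rule is a stand-in, not how any production tokenizer actually works. It only shows the shape of the problem, which is that numbers that look nearly identical to you can be chunked in different ways.

```python
# Toy illustration only. TOY_VOCAB is a made-up vocabulary and greedy
# longest-match is an assumption for the demo; real tokenizers learn their
# vocabulary and merge rules from data.
TOY_VOCAB = {"12", "123", "45", "price", " ", ":", "$"}


def toy_tokenize(text: str, vocab: set[str] = TOY_VOCAB) -> list[str]:
    """Split text by repeatedly taking the longest vocabulary entry that matches."""
    max_len = max(len(piece) for piece in vocab)
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, then shrink.
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if piece in vocab:
                tokens.append(piece)
                i += size
                break
        else:
            # No vocabulary entry matched: fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens


print(toy_tokenize("12345"))  # ['123', '45']
print(toy_tokenize("1245"))   # ['12', '45']
print(toy_tokenize("1234"))   # ['123', '4']
```

Three similar numbers, three different splits. With a real model, the equivalent check is to run your actual inputs through that model’s own tokenizer and look at the pieces before you design retrieval or arithmetic around them.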

RLHF is where it gets even more interesting. The model’s behavior is shaped by a fine-tuning process built on human preferences, and those preferences are not always what you want for your use case. A model that has been rewarded for sounding confident and helpful will sound confident and helpful even when it’s wrong. Understanding that dynamic changes how you think about validation layers, about when to add human-in-the-loop checks, about which parts of a pipeline you can actually trust.
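
One way to act on this is a validation layer that never consults how confident the answer sounds. The sketch below is an assumption about what such a layer could look like, not a standard pattern from any library: the names `Verdict`, `validate_output`, `route`, and the example checks are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    accepted: bool
    reason: str


def validate_output(answer: str, checks: dict[str, Callable[[str], bool]]) -> Verdict:
    """Accept an answer only if every independent check passes.

    The answer's tone or self-reported confidence is never consulted.
    """
    for name, check in checks.items():
        if not check(answer):
            return Verdict(False, f"failed check: {name}")
    return Verdict(True, "all checks passed")


def route(answer: str, checks: dict[str, Callable[[str], bool]]) -> str:
    """Send validated answers onward and everything else to a person."""
    return "auto" if validate_output(answer, checks).accepted else "human_review"


# Hypothetical checks for a task whose answer must be a percentage.
def is_integer(answer: str) -> bool:
    return answer.strip().isdigit()


def in_percent_range(answer: str) -> bool:
    return is_integer(answer) and 0 <= int(answer) <= 100


EXAMPLE_CHECKS = {"is_integer": is_integer, "in_percent_range": in_percent_range}

print(route("42", EXAMPLE_CHECKS))                             # auto
print(route("I am certain the answer is 42", EXAMPLE_CHECKS))  # human_review
```

The second answer sounds more sure of itself and still gets routed to a human, because the checks look at structure, not tone.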

The Architectural Gap Karpathy Is Actually Filling

One quote from the discussion around this course hit me: “The gap between engineers who understand this and engineers who don’t isn’t technical depth. It’s the ability to conceive of entirely different things.”

That is exactly right. I’ve seen it play out. Engineers who understand attention mechanisms think differently about context window management. They don’t just stuff the window and hope. They reason about what the model is actually doing with that context, where attention will degrade, and how to structure inputs to preserve signal. Engineers who understand how RLHF shapes behavior can anticipate systematic biases in model output rather than just discovering them in production.
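
Here is a rough sketch of what “structure inputs to preserve signal” can mean in code. It rests on a working assumption that you should verify for your own model: that material near the start and end of a long prompt is used more reliably than material buried in the middle. The function name `pack_context`, the relevance scores, and the character budget are all hypothetical; a real system would budget in tokens.

```python
def pack_context(scored_chunks: list[tuple[float, str]], budget_chars: int) -> list[str]:
    """Pick the most relevant chunks that fit, then put the strongest at the edges.

    Assumption (not a guarantee): the middle of a long context is the weakest
    position, so the least relevant kept chunks go there.
    """
    ranked = sorted(scored_chunks, key=lambda pair: pair[0], reverse=True)

    # Greedily keep the highest-scoring chunks that still fit in the budget.
    kept = []
    used = 0
    for _score, text in ranked:
        if used + len(text) <= budget_chars:
            kept.append(text)
            used += len(text)

    # Alternate placement: best chunk first, second-best last, and so on inward.
    front, back = [], []
    for index, text in enumerate(kept):
        (front if index % 2 == 0 else back).append(text)
    return front + back[::-1]


chunks = [(0.9, "A"), (0.1, "D"), (0.7, "B"), (0.4, "C")]
print(pack_context(chunks, budget_chars=4))  # ['A', 'C', 'D', 'B']
print(pack_context(chunks, budget_chars=3))  # ['A', 'C', 'B']
```

The point is not this particular ordering rule. It is that placement and trimming become explicit, testable decisions instead of whatever order the retriever happened to return.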

These are not marginal improvements. These are different classes of systems.

What Good Looks Like

The multi-agent Polymarket setup floating around on Twitter this week is a good example of this thinking applied in practice. One engineer built four agents with strict separation: a scanner, a brain, an executor, and an exit watcher. The scanner kills 93% of markets before any reasoning happens. The brain requires three out of four internal checks to agree before proceeding. This is not a “let the LLM figure it out” architecture. This is someone who thought carefully about where model judgment can be trusted and where it needs to be constrained by structure. That comes from understanding how the model actually reasons, not from treating it as an oracle.
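
I have not seen that engineer’s code, so treat the following as my own minimal sketch of the pattern, not a reconstruction. The field names, thresholds, and function names are hypothetical, and the exit watcher is left out. What it shows is the structure: cheap deterministic filters first, then a three-of-four quorum before anything is executed.

```python
from typing import Callable

Market = dict  # hypothetical record, e.g. {"id": ..., "volume": ..., "spread": ...}


def scan(markets: list[Market], filters: list[Callable[[Market], bool]]) -> list[Market]:
    """Scanner: cheap, deterministic filters that run before any model call."""
    return [m for m in markets if all(f(m) for f in filters)]


def brain_approves(market: Market, checks: list[Callable[[Market], bool]], quorum: int = 3) -> bool:
    """Brain: proceed only if at least `quorum` independent checks agree."""
    votes = sum(1 for check in checks if check(market))
    return votes >= quorum


def run_pipeline(
    markets: list[Market],
    filters: list[Callable[[Market], bool]],
    checks: list[Callable[[Market], bool]],
    execute: Callable[[Market], None],
) -> list[str]:
    """Executor step runs only for markets that survive both earlier stages."""
    executed = []
    for market in scan(markets, filters):
        if brain_approves(market, checks):
            execute(market)
            executed.append(market["id"])
    return executed
```

In a real system some of the four checks might be model calls and others plain rules. The quorum means no single judgment, model or otherwise, can trigger an action on its own.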

Where to Start

Watch Karpathy’s course. It is 3 hours and it is free. It covers the ground that matters: tokenization, attention, RLHF, hallucinations as a structural property rather than a bug, tool use, and how systems like DeepSeek were actually built. The Stanford lecture that has also been circulating covers similar ground from a different angle, and both are worth your time.

Then go back and look at something you’ve built. Ask yourself where you made assumptions about model behavior that you can’t defend mechanistically. You’ll find at least one place where understanding the internals would have changed the design.

The engineers who get this right aren’t the ones who prompt more cleverly. They’re the ones who stopped treating the model as a black box with good PR and started treating it as a system they’re responsible for understanding.

That shift is not optional anymore. It’s what separates systems that hold up from systems that don’t.

#AI #MachineLearning #LLM #SoftwareEngineering #AIEngineering