Nvidia releases Nemotron-3 Super 120B MoE model built for AI agents, open source

Nvidia Just Changed the Agent Hardware Equation

Most open source model releases are variations on a theme. Bigger parameter count, slightly different training mix, a new benchmark to wave around. Nemotron-3 Super 120B is not that. This one actually made me stop and think about what teams building internal AI agents are going to look like in 12 months.

Let me explain why.

The Architecture Is the Story

120 billion total parameters. 12 billion active at inference time. That gap is the whole point.

Mixture of Experts means the model routes each token through a small subset of specialized sub-networks rather than lighting up the entire parameter space on every pass. The result is that you get reasoning capacity that scales with 120B of trained knowledge, but your actual compute bill at inference time reflects something closer to a 12B dense model.
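To make the routing idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not Nemotron-3 Super's actual router: the expert count, the `top_k` value, the logits, and the toy "experts" (which just scale the input) are all assumptions picked for illustration.

```python
import math

def softmax(scores):
    # Numerically stable softmax over the router scores.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_logits, top_k):
    """Pick the top_k experts for one token and renormalise their weights."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

def moe_layer(token, experts, router_logits, top_k):
    """Weighted sum of the outputs of only the selected experts."""
    weights = route_token(router_logits, top_k)
    out = [0.0] * len(token)
    for idx, w in weights.items():
        expert_out = experts[idx](token)  # the other experts never run
        out = [o + w * e for o, e in zip(out, expert_out)]
    return out, sorted(weights)

# Hypothetical setup: 8 toy experts, 2 active per token.
NUM_EXPERTS = 8
TOP_K = 2
experts = [lambda x, s=i + 1: [s * v for v in x] for i in range(NUM_EXPERTS)]
token = [1.0, 2.0]
logits = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]  # made-up router scores

output, used = moe_layer(token, experts, logits, TOP_K)
print("experts used:", used)
print("output:", output)
```

Only the selected experts do any work for this token. The other six sit idle, which is where the gap between total and active parameters comes from.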

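That is a meaningful distinction if you are paying cloud bills or speccing out on-premise hardware for an agent deployment. One caveat: all 120B parameters still have to sit in memory even though only 12B fire per token, so "workstation" here means a machine with plenty of GPU or unified memory, most likely running quantized weights. With that in place, a well-configured workstation can run this. You are not requisitioning a rack of A100s.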
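A rough back-of-envelope calculation separates the two costs that the active/total gap affects differently. The sketch below assumes the common rule of thumb of about 2 FLOPs per active parameter per token for a forward pass, and it counts weight memory only (no KV cache, no activations). The bit widths are illustrative assumptions, not a statement about which quantized builds exist.

```python
TOTAL_PARAMS = 120e9   # all experts must be resident in memory
ACTIVE_PARAMS = 12e9   # parameters actually used per token

def weight_memory_gb(params, bits_per_param):
    """Memory needed for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_param / 8 / 1e9

def forward_flops_per_token(active_params):
    """Rule-of-thumb estimate: ~2 FLOPs per active parameter per token."""
    return 2 * active_params

# Memory scales with TOTAL parameters, whatever the routing does.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(TOTAL_PARAMS, bits):.0f} GB")

# Compute scales with ACTIVE parameters.
compute_ratio = forward_flops_per_token(ACTIVE_PARAMS) / forward_flops_per_token(TOTAL_PARAMS)
print(f"per-token compute vs. a dense 120B model: {compute_ratio:.0%}")
```

So the saving is in compute per token, not in memory footprint. That is worth keeping in mind when sizing hardware.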

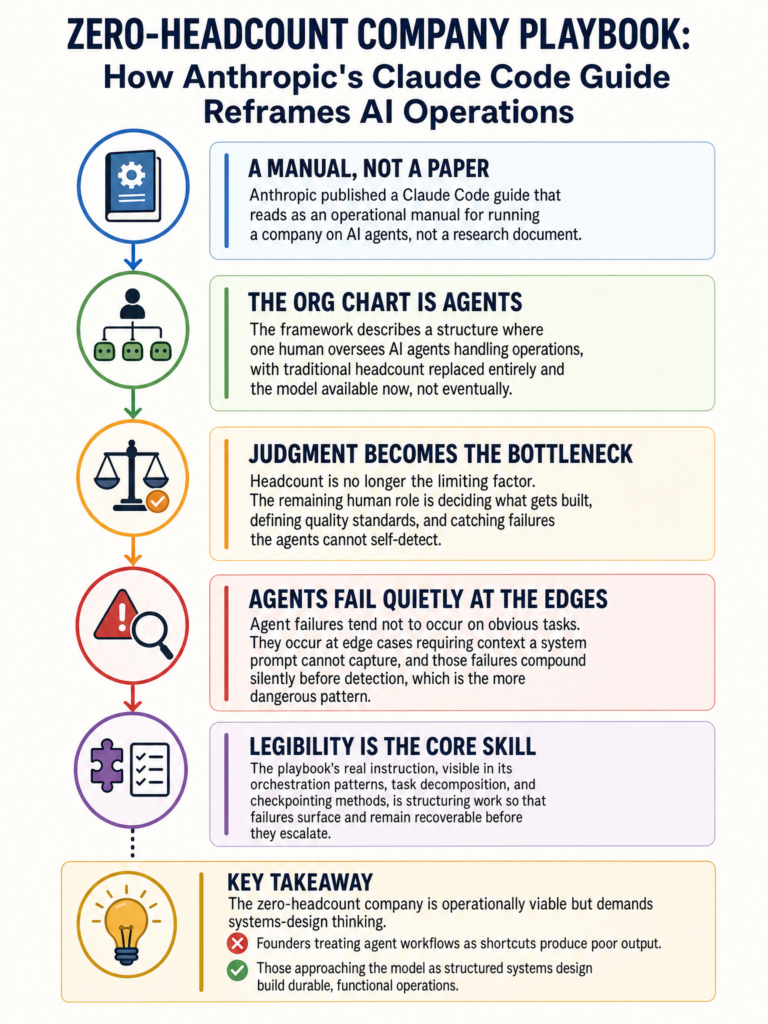

Built for Agents, Not Retrofitted

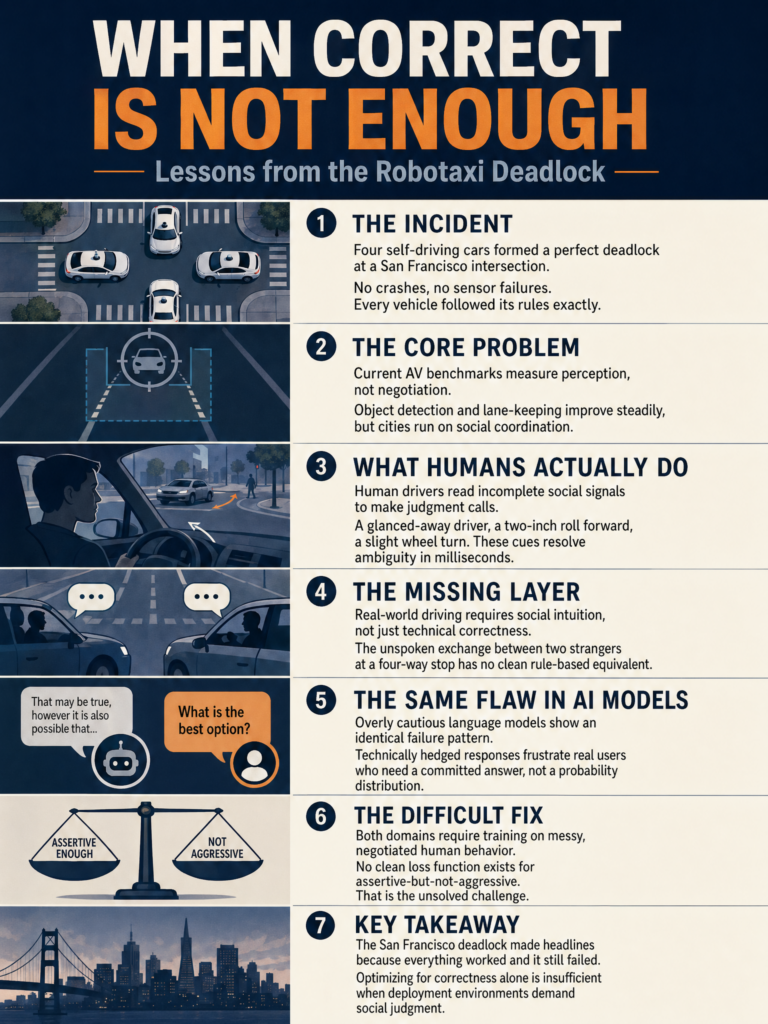

This is where I think most coverage is missing the real point.

The dominant pattern for agentic LLM deployments right now is: take a general-purpose model, add a system prompt, bolt on a tool-use framework, and hope it holds together across multi-step tasks. It often does not, and the failure modes are annoying to debug.

Nvidia built Nemotron-3 Super with agentic workloads as the primary design target. Tool use, multi-step reasoning, task delegation. These were first-class concerns during training, not afterthoughts layered on top. That starting point produces different behavior under the kinds of conditions where agents actually break: long context chains, ambiguous handoffs between subtasks, tool call errors that require recovery.
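For a sense of what "tool call errors that require recovery" means in practice, here is a minimal sketch of an agent loop that feeds a tool failure back to the model as an observation instead of crashing. Everything in it is hypothetical: `stub_model` is a stand-in for a real model call, `make_flaky_tool` simulates a tool that times out, and the message format is invented for the example.

```python
class ToolError(Exception):
    """Raised by a tool when a call fails."""

def make_flaky_tool(fail_times):
    # Hypothetical tool that fails the first `fail_times` calls, then succeeds.
    calls = {"n": 0}
    def lookup(arg):
        calls["n"] += 1
        if calls["n"] <= fail_times:
            raise ToolError("timeout")
        return f"result for {arg}"
    return lookup

def stub_model(history):
    """Stand-in for a model: answer after a good tool result, otherwise (re)try the tool."""
    last = history[-1]
    if last["role"] == "tool" and not last["error"]:
        return {"type": "answer", "text": last["content"]}
    return {"type": "tool_call", "name": "lookup", "arg": "order 42"}

def run_agent(model, tools, task, max_steps=5):
    history = [{"role": "user", "content": task, "error": False}]
    for _ in range(max_steps):
        action = model(history)
        if action["type"] == "answer":
            return action["text"], history
        try:
            content, error = tools[action["name"]](action["arg"]), False
        except ToolError as exc:
            # Recovery path: the failure becomes context for the next step.
            content, error = f"tool failed: {exc}", True
        history.append({"role": "tool", "content": content, "error": error})
    return None, history  # step budget exhausted without an answer

answer, history = run_agent(stub_model, {"lookup": make_flaky_tool(1)}, "Where is order 42?")
print(answer)
```

The stub always retries, which a real model will not do reliably. Deciding what to do with that error message is the behavior a model trained for agentic work is supposed to handle better.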

I have not run extensive evals on it yet, but the architectural choice alone tells me Nvidia thought carefully about where the real failure surface is in production agent systems.

Open Source Changes the Math

The model is open source. That matters beyond the obvious cost angle.

Teams can audit the model behavior. They can fine-tune on internal data without sending that data through a third-party API. They can deploy it entirely behind their own network perimeter. For enterprises building agents that touch sensitive internal systems, those are not nice-to-haves.

Less than 24 hours after the release, Min Choi was already walking through full setup and workflow demonstrations on X (https://x.com/minchoi/status/2033216384159690805). The community picked this up fast. When a model is open, that feedback loop is immediate and visible in ways that closed API models simply cannot match.

Who This Is Actually For

If you are a solo developer building a personal assistant, this is probably more model than you need. If you are an engineering team deploying agents across internal tools, data pipelines, or customer-facing workflows where you need predictable multi-step behavior at reasonable cost, this is exactly the right profile.

The 12B active parameter count keeps per-token compute low, which leaves headroom to serve several agents in parallel without exotic infrastructure. That matters for agentic systems where you want concurrency across tasks rather than one agent waiting on another.
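The concurrency pattern itself is simple to sketch. The example below uses Python's `asyncio` with a semaphore to cap how many agent tasks are in flight at once. `stub_agent` is a hypothetical placeholder for a real call to a model server, and the limit of 3 is an arbitrary assumption you would tune to your hardware.

```python
import asyncio

async def stub_agent(task_id, delay=0.01):
    # Placeholder for a real agent run (e.g. a request to a local model server).
    await asyncio.sleep(delay)
    return f"task {task_id} done"

async def run_concurrently(task_ids, max_in_flight):
    """Run all tasks, with at most `max_in_flight` executing at any moment."""
    sem = asyncio.Semaphore(max_in_flight)

    async def guarded(tid):
        async with sem:
            return await stub_agent(tid)

    # gather returns results in the same order as the inputs.
    return await asyncio.gather(*(guarded(t) for t in task_ids))

results = asyncio.run(run_concurrently(range(6), max_in_flight=3))
print(results)
```

The semaphore is the knob: raise it until latency per task starts to degrade, and that is roughly your concurrency budget for the box.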

Where I Think This Lands

The agent runtime problem has always had two hard constraints: model capability and inference cost. For a long time those pulled in opposite directions. Bigger models were more capable but expensive. Smaller models were cheap but broke on complex tasks.

MoE architectures like this one are a real answer to that tension, not a workaround. Nemotron-3 Super is Nvidia putting its weight behind the idea that the agent deployment era needs models designed specifically for it, not general models pressed into service.

That is a bet I think pays off. The next generation of internal AI tooling is going to be built on models like this, not on API calls to frontier models that bill you per token and route your data through someone else’s servers.

Keep an eye on what the fine-tuning community does with this over the next few months. That is where the real signal will emerge.

#NvidiaAI #Nemotron #AIAgents #OpenSource #MixtureOfExperts #LLM #MachineLearning #MLEngineering