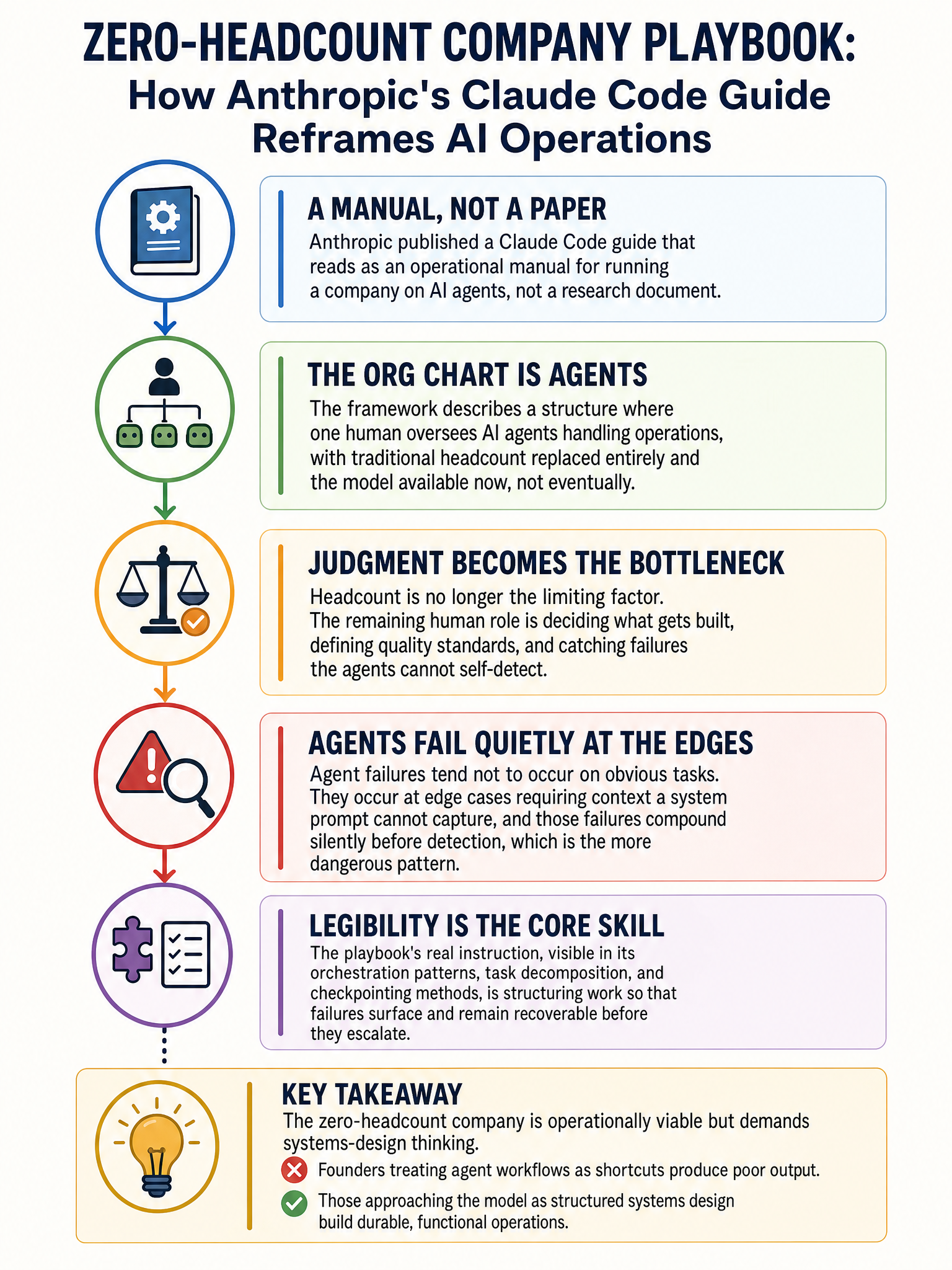

Anthropic Just Published an Operational Manual for the Agent-First Company

I’ve read a lot of AI research papers, product announcements, and thought leadership posts over the past few years. Most of them describe a future. Anthropic’s Claude Code best practices document describes a present. That distinction matters more than people are giving it credit for.

The document at https://www.anthropic.com/news/claude-code-best-practices is not a capabilities demo. It’s not a whitepaper. It reads like a founder’s internal wiki for running a company where the workforce is mostly agents and the human at the top makes the judgment calls instead of doing the execution work. That is structurally different from anything a frontier lab has published before.

🔧 What the Playbook Actually Says

The core thesis is simple: one human operator can run what used to require a full team, if that operator knows how to structure agent workflows, manage context, and set up the right evaluation loops. Claude Code handles the execution. The human handles the taste.

That’s not a metaphor. Anthropic is telling founders, step by step, how to build a company where the org chart is almost entirely agents. Not in 2030. Now.

The bottleneck has shifted from headcount to judgment. Someone still has to decide what gets built, what quality looks like, and when the agent has gone off the rails. That’s the remaining human job. It’s a real job. But it’s one job, not twenty.

Why This Is Different From “AI Will Replace Jobs”

The “AI will replace jobs” conversation has been happening for a decade and has mostly produced anxiety, not operational change. This document is different because it’s prescriptive and specific. It tells you how to set up parallel agent workflows, how to manage context windows across long tasks, and how to write evaluation criteria so you know when output is good enough to ship.
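To make "evaluation criteria" concrete, here is a minimal sketch of my own in Python. It is not taken from Anthropic's document. The `Criterion` class, the `evaluate` helper, and the three example checks are all hypothetical names I made up for illustration. The idea is simply that "good enough to ship" becomes a list of explicit, named checks instead of a gut feeling.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    """One named, explicit check on agent output (hypothetical helper)."""
    name: str
    check: Callable[[str], bool]


def evaluate(output: str, criteria: list[Criterion]) -> list[str]:
    """Return the names of every criterion the output fails."""
    return [c.name for c in criteria if not c.check(output)]


# Illustrative criteria only; real ones depend on what you are shipping.
criteria = [
    Criterion("non_empty", lambda text: bool(text.strip())),
    Criterion("no_todo_markers", lambda text: "TODO" not in text),
    Criterion("under_500_chars", lambda text: len(text) <= 500),
]

# Stand-in for something an agent produced.
draft = "def add(a, b):\n    return a + b  # TODO: handle overflow"

failures = evaluate(draft, criteria)
ready_to_ship = not failures

print("failed criteria:", failures)
print("ready to ship:", ready_to_ship)
```

The checks here are deliberately trivial. What matters is the shape: every criterion has a name, so when output gets rejected you know which standard it missed and can feed that back to the agent.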

That’s an instruction set. And instruction sets get copied.

The real implication is not that companies will shrink. Some will. The more important implication is that the cost of starting a company has dropped by an order of magnitude for anyone willing to learn how to manage agents well. A solo founder with strong judgment can now cover ground that previously required a seed-stage team.

🤖 The Darker Use Cases Are Already Live

While Anthropic published the responsible operational guide, the market immediately demonstrated where this technology actually goes in the wild. One widely circulated account described a 21-year-old using Claude Code, Flux for image generation, and ElevenLabs for voice synthesis to build a fictional OnlyFans persona named Maya. The operation ran on four markdown files and pulled in $43,000 in its first month. 1,247 paying subscribers. No camera. No team. No real person.

I’m not celebrating that. I’m pointing at it because it tells you something true about the gap between “how this technology is designed to be used” and how it actually gets deployed when you hand capable tools to a million different people with a million different goals.

The same agent orchestration logic that Anthropic wants you to use to run a lean software company works just as well for something entirely different. The playbook is neutral. The applications are not.

What Builders Should Actually Take Away

The founders who benefit most from this shift will not be the ones who automate the most aggressively. They’ll be the ones who figure out what still requires human judgment and protect that part of the workflow.

Agent systems make mistakes. They drift. They optimize for the wrong thing when the evaluation criteria are fuzzy. Someone has to catch that. The operational skill being described in Anthropic’s playbook is less about prompting and more about system design: how do you structure work so that errors are caught before they compound?
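One way to picture "catching errors before they compound" is a pipeline that validates each stage's output before the next stage is allowed to build on it. The sketch below is my own illustration in Python, not something from the playbook. `Stage`, `PipelineResult`, and `run_with_checkpoints` are hypothetical names, and the lambdas stand in for real agent steps.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Stage:
    """A unit of agent work plus the check its output must pass."""
    name: str
    run: Callable[[int], int]
    check: Callable[[int], bool]


@dataclass
class PipelineResult:
    value: int                 # last output that passed its check
    completed: list[str]       # stages whose output was accepted
    halted_at: Optional[str]   # stage that failed its check, if any


def run_with_checkpoints(initial: int, stages: list[Stage]) -> PipelineResult:
    """Run stages in order, stopping at the first failed check."""
    value = initial
    completed: list[str] = []
    for stage in stages:
        candidate = stage.run(value)
        if not stage.check(candidate):
            # Stop here so later stages never build on bad output.
            return PipelineResult(value, completed, stage.name)
        value = candidate
        completed.append(stage.name)
    return PipelineResult(value, completed, None)


# Toy stages: "refine" simulates an agent drifting off course.
stages = [
    Stage("draft", lambda x: x + 1, lambda x: x > 0),
    Stage("refine", lambda x: -x, lambda x: x > 0),
    Stage("publish", lambda x: x * 100, lambda x: True),
]

result = run_with_checkpoints(1, stages)
print(result)
```

In this toy run the pipeline halts at "refine" and "publish" never executes, which is the point: the bad output is stopped one stage in, while it is still cheap to fix, and the human gets a named stage to look at.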

That’s an engineering problem. It’s also a product sense problem. The founders who treat it as both will build something durable. The ones who just turn agents loose and check back later will find out what “compounding errors” means the hard way.

The zero-headcount company is not a thought experiment anymore. It’s a business model with an operational manual behind it. Whether that’s good news depends entirely on what you plan to build with it.

#ClaudeCode #AIAgents #ArtificialIntelligence #Startups #FutureOfWork #Anthropic #MachineLearning