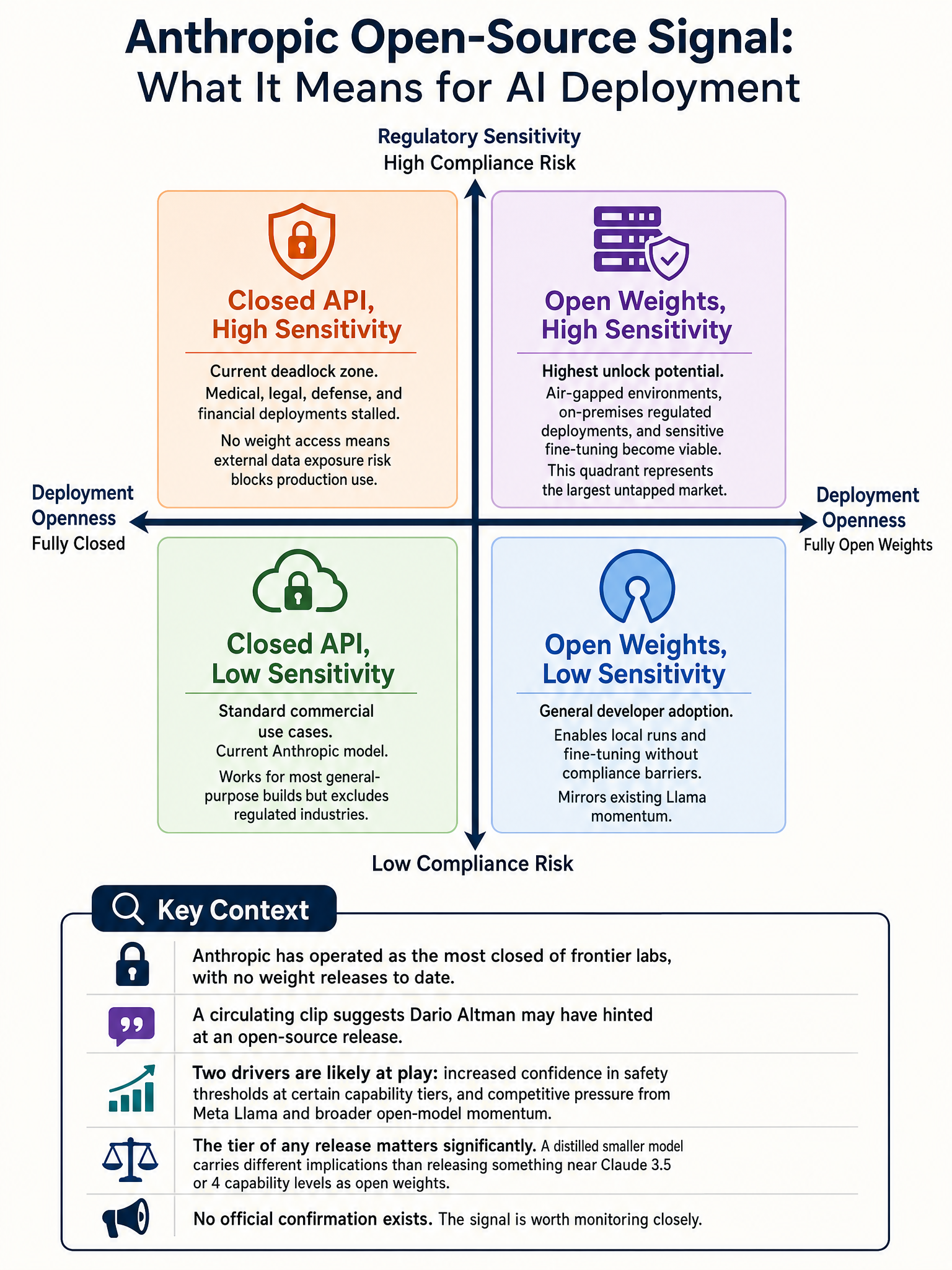

Rumors of Anthropic releasing an open-source model, and what it would mean for the AI ecosystem

Anthropic and Open Source: Reading Between the Lines on a Very Big Rumor

Something is shifting at Anthropic, and the AI community is paying attention.

A short video clip circulating on X shows Dario Amodei apparently reacting to a post about open-source AI winning. The original tweet from Ahmad (@TheAhmadOsman) reads simply: “Contrary to the recent popular narrative, Opensource AI will win.” The quote-tweet response: “Dario might be showing her Anthropic’s upcoming opensource release boys.”

That’s thin as evidence goes. A video clip, an inference, a lot of reading between the lines. But the reaction was immediate, and the speculation is worth taking seriously, because if Anthropic does release open weights, it would be one of the more significant strategic reversals in recent AI history.

Why Anthropic Is the One That Matters

Meta releasing Llama models is expected at this point. Google has released open models. Mistral built its brand on it. But Anthropic has been the holdout, almost conspicuously so.

Their entire public identity has been built around safety-first, controlled deployment. No weights. No local runs. You access Claude through the API, on their infrastructure, under their terms. That is not an accident. It reflects a deliberate philosophical stance: releasing powerful model weights into the wild introduces risks that cannot be walked back.

If Dario really is hinting at an open-source release, even obliquely, it would mean something has changed in that internal calculus.

Two Possible Readings

The first reading is that Anthropic has gotten comfortable enough with a particular capability tier. Maybe the model they’d release is not Claude 3.7 Sonnet or anything near their frontier. Maybe it’s a smaller, older model where they’ve assessed the risk profile and decided open weights are acceptable. This would be consistent with their stated safety framework. You release what you trust.

The second reading is less flattering. Competitive pressure is real. Meta’s Llama releases have eaten into the developer mindshare that Anthropic might otherwise have captured. The open-source ecosystem has momentum that closed APIs cannot fully compete with. Developers want to run things locally. They want fine-tuning control. They want to avoid API costs at scale. If Anthropic stays fully closed while the rest of the market moves, they risk becoming irrelevant to an entire segment of builders.

My honest read is that it is probably both. Safety considerations and business pressure are not mutually exclusive. The question is which one is doing more of the driving.

What It Would Actually Change

The practical impact would be significant for anyone building on top of AI infrastructure.

Right now, if you want the best reasoning performance without paying per-token at scale, your open-weight options are Llama models and a handful of others. An Anthropic open-weight model, even a smaller one, would immediately become one of the most scrutinized releases in the space, given the quality bar Claude has set in benchmarks and real-world use.

It would also change the fine-tuning conversation. Claude’s instruction following and tone have become something developers genuinely prefer. Putting that in a locally runnable package would matter.


What I’m Watching For

The clip alone proves nothing. Dario could have been showing someone anything. The inference chain from “he smiled at an open-source tweet” to “Anthropic is releasing weights” is long.

But the timing is interesting. The AI developer ecosystem is fracturing between closed API dependency and open infrastructure. Companies building serious products are increasingly wary of being locked into a single provider’s pricing and uptime decisions. An Anthropic open model, even a mid-tier one, would be a genuine answer to that anxiety.

If this rumor materializes, I will be watching the model tier they choose to release. That choice will tell you more about their actual safety reasoning than any policy document they’ve published.

A controlled open release is not a contradiction for a safety-focused lab. It might actually be the more honest position: we trust this capability level, we do not yet trust the next one. That framing, if they can articulate it clearly, is something the field needs more of.


#Anthropic #OpenSource #AIStrategy #LLM #MachineLearning #GenerativeAI