China backs orbital data center startup with $8.4 billion in credit lines, signaling a new front in the AI compute race

China Just Bet $8.4 Billion That the Future of AI Compute Is in Orbit

Most of the AI compute conversation right now is about H100 allocations, inference costs per token, and who has the largest training cluster. That’s a real fight. But China just signaled that it’s also playing a completely different game, one that most people in the Western AI world aren’t even watching.

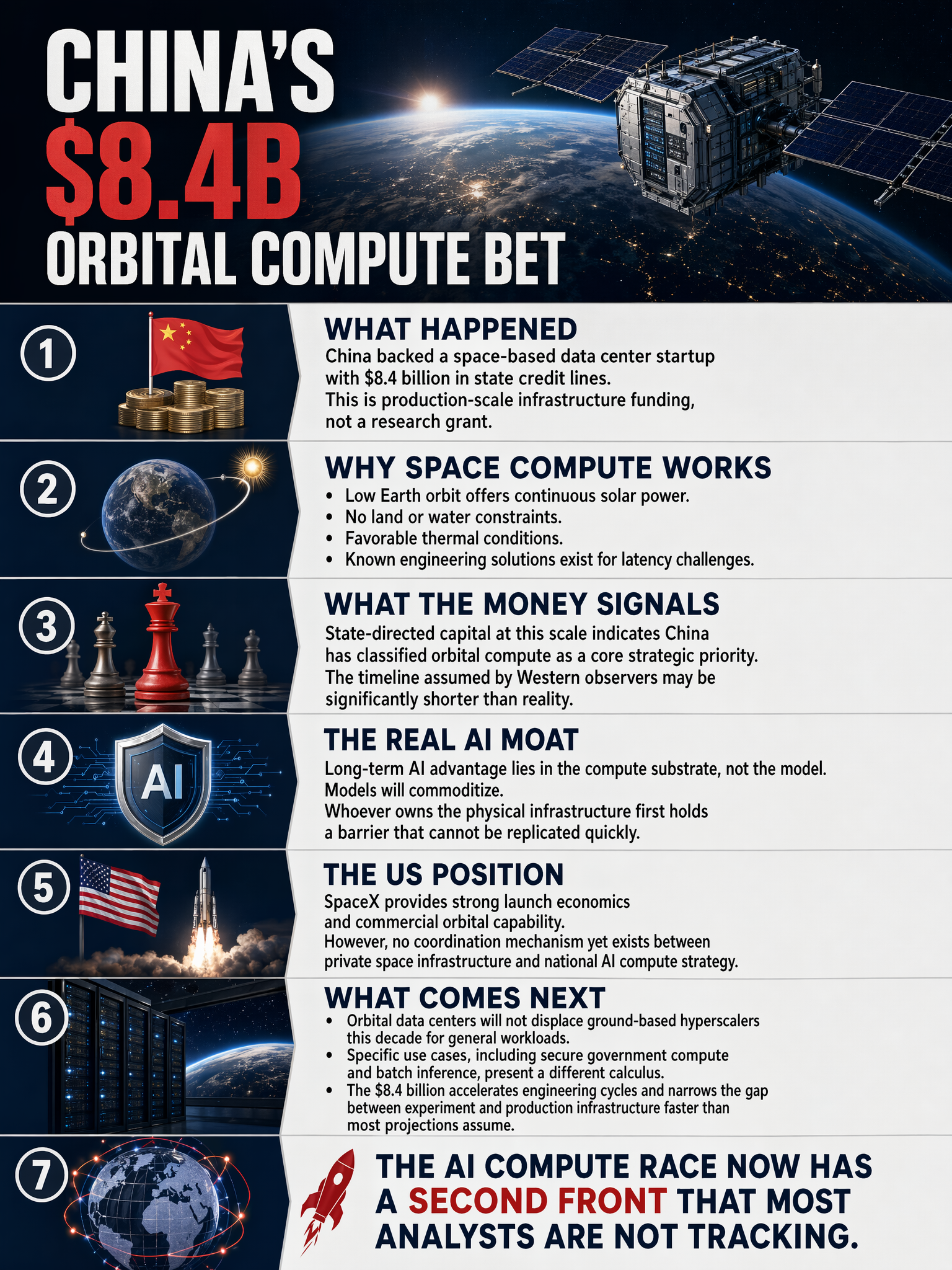

A Chinese orbital data center startup just secured $8.4 billion in credit lines. Government-backed. Space-based. Not a pilot program or a research grant. Eight point four billion dollars to put compute infrastructure into low Earth orbit.

Elon Musk saw the SpaceNews report and posted a single word: “Interesting.” That’s about as close to a tell as you’ll get from someone who runs both a rocket company and an AI lab.

Why Orbital Compute Is a Serious Idea

I want to be clear that this isn’t speculative moonshot territory anymore. The physics actually work in favor of space-based data centers in ways that matter enormously at scale.

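In the right low Earth orbit, solar power is abundant and close to continuous. A dawn-dusk sun-synchronous orbit rides the day/night boundary and stays in sunlight almost all year, with no weather and almost no night. A typical lower-inclination orbit, by contrast, spends roughly a third of each lap in Earth’s shadow, so orbit choice matters. Either way: no land acquisition fights, no permitting delays that stretch years, no cooling water sourced from drought-stressed regions. The thermal picture is a trade rather than a free win. There is no Phoenix summer to fight, but in vacuum every watt of waste heat has to leave by radiation, so radiator area becomes a first-order design constraint.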
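How continuous the sunlight actually is depends on the orbit, and it’s easy to put a rough number on it. The sketch below is my own back-of-envelope, not anything from the reported program: it assumes a circular orbit, a spherical Earth, and a simple cylindrical shadow, and `eclipse_fraction` is an illustrative name. The beta angle is the angle between the orbital plane and the direction to the Sun.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius (spherical-Earth assumption)


def eclipse_fraction(altitude_km: float, beta_deg: float) -> float:
    """Fraction of one circular orbit spent in Earth's shadow.

    Assumes a cylindrical shadow behind a spherical Earth.
    beta_deg is the angle between the orbit plane and the Sun direction.
    """
    r = EARTH_RADIUS_KM + altitude_km
    beta = math.radians(abs(beta_deg))

    # Above this beta angle the orbit never crosses the shadow cylinder.
    beta_limit = math.asin(EARTH_RADIUS_KM / r)
    if beta >= beta_limit:
        return 0.0

    # Half-angle of the shadowed arc, measured from the anti-Sun point.
    x = math.sqrt(r**2 - EARTH_RADIUS_KM**2) / (r * math.cos(beta))
    return math.acos(min(1.0, x)) / math.pi


if __name__ == "__main__":
    for beta in (0, 30, 60, 80):
        frac = eclipse_fraction(550.0, beta)
        print(f"550 km, beta={beta:>2} deg: {frac:.1%} of each orbit in shadow")
```

Under these assumptions, a 550 km orbit with the Sun in the orbital plane spends roughly 37% of each lap in shadow, while a high-beta orbit such as a dawn-dusk sun-synchronous one sees none. That is the gap between “abundant” and “continuous.”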

The latency problem is real, but it is mostly a function of orbital altitude and antenna infrastructure, both of which are engineering problems with known solution paths. The speed-of-light floor from low Earth orbit is a few milliseconds. That’s a constraint, not a physics wall.
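To make the altitude dependence concrete, here is a minimal sketch of the propagation-only round trip between a ground station and a satellite. It assumes a spherical Earth and straight-line, vacuum-speed propagation, and it ignores routing, queuing, and processing delay, which usually dominate in practice. The function names and the example altitudes are my own choices for illustration.

```python
import math

SPEED_OF_LIGHT_KM_S = 299_792.458
EARTH_RADIUS_KM = 6371.0  # mean Earth radius (spherical-Earth assumption)


def slant_range_km(altitude_km: float, elevation_deg: float = 90.0) -> float:
    """Straight-line distance from a ground station to a satellite.

    elevation_deg is the satellite's angle above the local horizon;
    90 degrees means directly overhead.
    """
    e = math.radians(elevation_deg)
    r = EARTH_RADIUS_KM + altitude_km
    return (
        math.sqrt(r**2 - (EARTH_RADIUS_KM * math.cos(e)) ** 2)
        - EARTH_RADIUS_KM * math.sin(e)
    )


def round_trip_ms(altitude_km: float, elevation_deg: float = 90.0) -> float:
    """Propagation-only round-trip time in milliseconds."""
    distance = slant_range_km(altitude_km, elevation_deg)
    return 2.0 * distance / SPEED_OF_LIGHT_KM_S * 1000.0


if __name__ == "__main__":
    for label, alt in (("LEO, 550 km", 550.0), ("GEO, 35,786 km", 35_786.0)):
        print(f"{label}: {round_trip_ms(alt):.1f} ms overhead, "
              f"{round_trip_ms(alt, 25.0):.1f} ms at 25 deg elevation")
```

Directly overhead, 550 km works out to under 4 ms round trip, against roughly 240 ms from geostationary altitude. The low-elevation case is why ground-station density matters: the fewer stations you have, the longer the slant paths you are stuck with.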

What $8.4 Billion Actually Signals

This isn’t a startup doing a seed round with some government interest attached. This is state-directed capital at a scale that says China has decided orbital compute infrastructure is strategic, full stop.

For context, the U.S. has been building out domestic semiconductor capacity through programs like the CHIPS Act, while hyperscalers pour private capital into data centers. That conversation has been almost entirely ground-based. While U.S. hyperscalers race to build the next desert-adjacent gigawatt campus, China appears to be funding a parallel track that tries to sidestep the terrestrial bottlenecks entirely.

That’s a different bet. It might not pay off on the same timeline. But the fact that it’s being made at $8.4 billion means they think the timeline is shorter than most Western observers assume.

The Real Long Game in AI

Here’s what I think gets missed in the daily discourse about model benchmarks and API pricing. The competitive moat in AI, long term, is not the model. Models will commoditize faster than anyone is comfortable admitting. The moat is the compute substrate: who owns it, where it sits, and how much it costs to run.

If orbital infrastructure becomes viable at scale, the country that builds it first has a physical compute advantage that is extremely hard to replicate quickly. You can’t just spin up a competing constellation in 18 months. The supply chain, launch cadence, and capital requirements create a natural barrier.

The U.S. Has SpaceX. That’s Not Nothing.

The obvious counterpoint is that the U.S. has Starlink, Starship, and an increasingly mature commercial launch industry. SpaceX has better launch economics than anyone on Earth right now. If orbital data centers become the next frontier, America has the launch infrastructure to compete.

But SpaceX is a private company with its own agenda, not a dedicated government compute platform. The coordination problem between private orbital capability and national AI strategy is real, and nobody has solved it yet.

China appears to be solving that coordination problem by just… funding the whole stack directly. Messy for efficiency, effective for strategic alignment.

Where This Goes

I don’t think orbital data centers replace ground-based hyperscalers within this decade. The economics aren’t there yet for general workloads. But for specific use cases (secure government compute, latency-tolerant batch inference, regions with no viable land-based infrastructure), the calculus looks different.

What matters right now is that an $8.4 billion commitment changes the development trajectory. That capital funds engineering talent, launch contracts, and hardware iteration cycles. Over the next five years, the gap between “interesting experiment” and “production infrastructure” could close faster than most people expect.

The AI compute race has a new front. Most people are still looking at the GPU scorecard.

🛰️

#AI #SpaceTech #DataCenters #China #ComputeInfrastructure #AIStrategy #MachineLearning