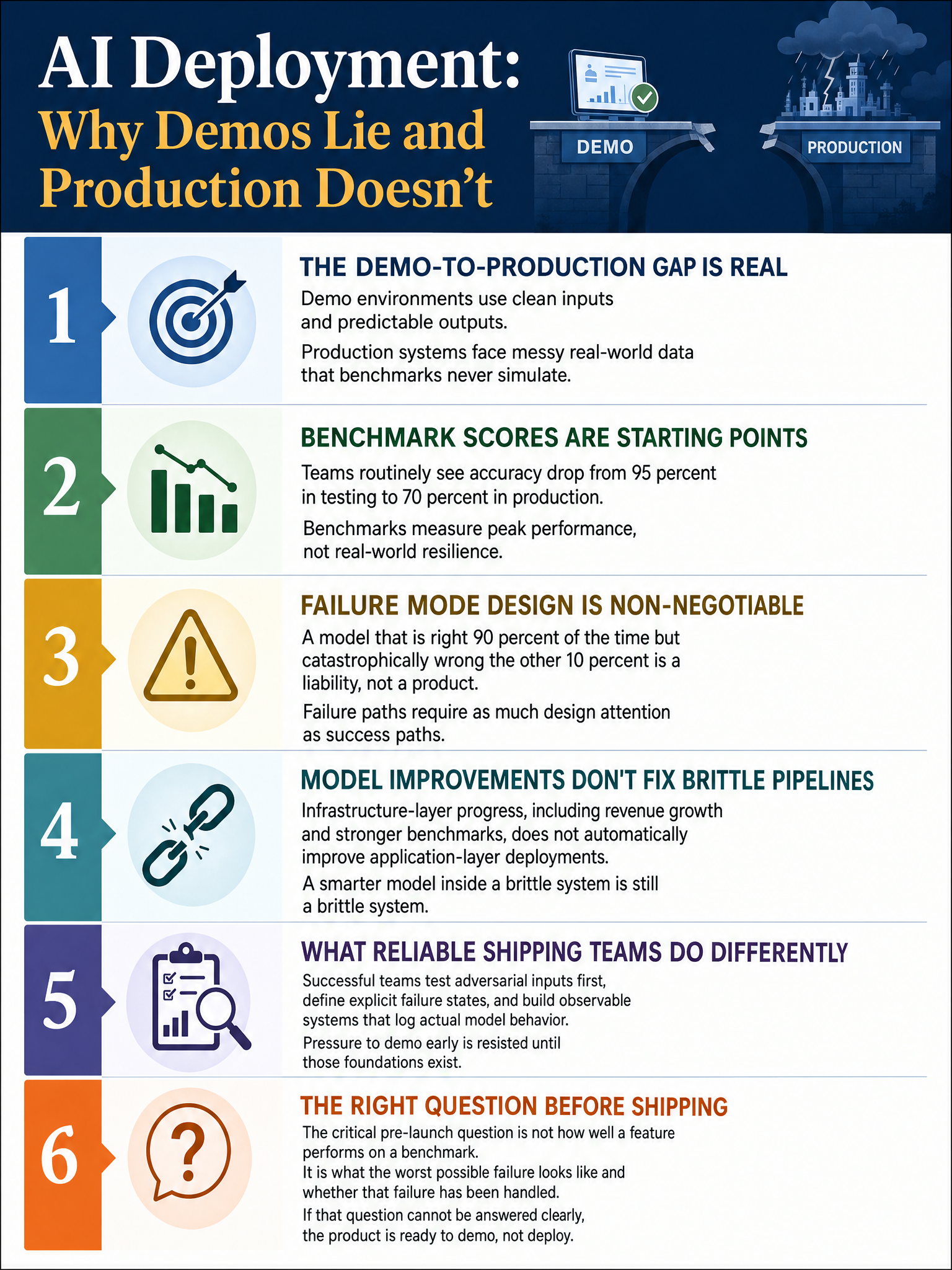

Insight on the gap between AI demos and production deployments, and why failure mode design matters more than benchmark performance

The Demo Worked Great. The Deployment Did Not.

Every few weeks, a new AI agent demo drops and the timeline lights up. Someone ships a polished video, the agent breezes through a 12-step workflow in 90 seconds, and the replies fill with “this changes everything.” I get it. The demos are genuinely impressive sometimes.

Then the actual engineers try to build something real with it. And that is where the quiet failures begin.

The Gap Is Not a Bug, It’s a Design Choice

Demo environments are built for the happy path. Clean inputs. Predictable outputs. No legacy data. No user who entered their address three different ways across four records in the same CRM.

Production is the opposite of that.

I have watched teams spend weeks getting an agent to 95% accuracy on a benchmark, then deploy it against real user data and watch it fall apart at 70%. The benchmark was not lying. The benchmark was just measuring the wrong thing. It was measuring peak performance under ideal conditions, not resilience under the chaos that actual users generate every single day.

That 25-point gap is not a rounding error. It is the difference between a product and a prototype.

Why Failure Mode Design Actually Matters

Here is the thing nobody wants to talk about in the demo reel: what happens when the model is wrong?

If you have not designed for that, your system will fail loudly, in front of users, in ways that destroy trust fast. A model that is right 90% of the time but catastrophically wrong the other 10% is not a 90% accurate model in practice. It is a liability.

The teams that ship successfully are the ones who spent at least as much time designing the failure paths as the success paths. What does the system do when confidence is low? Does it ask for clarification, fall back to a human, log the anomaly, or silently produce garbage? That last option is the one most demos never show you.

Benchmark Performance Is a Starting Point, Not a Finish Line

Anthropic’s CFO Krishna Rao recently appeared on Patrick O’Shaughnessy’s podcast and mentioned that Anthropic’s internal run-rate revenue has grown from roughly $250M two years ago to $30B today. That growth is real and the models are getting genuinely better. But revenue growth at the infrastructure layer does not automatically translate into successful application-layer deployments. The model improving does not mean your production system improves. Those are two separate problems.

I have seen teams treat a better benchmark score as permission to skip the hard work of fault tolerance design. It is not. A smarter model in a brittle pipeline is still a brittle pipeline.

What Actually Closes the Gap

The teams I have seen ship reliably do a few things differently.

They test against adversarial inputs before they test against clean ones. They define explicit failure states with defined behaviors for each. They build observable systems, which means logging what the model actually did, not just whether it succeeded. And they resist the pressure to demo before those foundations exist.

That last one is cultural and it is hard. There is enormous pressure in AI right now to show something impressive quickly. Andrej Karpathy has pointed out publicly that the skill gap in AI is not access to models, it is knowing how to actually use them for real work. I think the same applies to teams building with AI. The gap is not usually the model. It is the engineering discipline around the model.

The Real Question to Ask Before You Ship

Before any AI feature goes to production, the question I now ask first is not “how well does this perform on the benchmark?” It is “what is the worst thing this can do, and have we handled it?”

If you cannot answer that second question clearly, you are not ready to ship. You are ready to demo.

And demos, as we have established, are a different product entirely.

Sources & Further Reading

#AI #MachineLearning #MLEngineering #ProductEngineering #AIDeployment